Reading List

The most recent articles from a list of feeds I subscribe to.

Safari 16.4 Is An Admission

If you're a web developer not living under a rock, you probably saw last week's big Safari 16.4 reveal. There's much to cheer, but we need to talk about why this mega-release is happening now, and what it means for the future.

But first, the list!

WebKit's Roaring Twenties

Apple's summary combines dozens of minor fixes with several big-ticket items. Here's an overview of the most notable features, most of which had already shipped in Chromium:

- Web Push for iOS (but only for installed PWAs)

- PWA Badging API (for unread counts) and "id" support (making updates smoother)

- PWA installation for third-party browsers (but not to parity with "Smart Banners")

- A bevy of Web Components features, many of which Apple had held up in standards bodies for years[1], including:

- Constructable Stylesheets (important for performance)

- Form participation and default ARIA role

- Declarative Shadow DOM for "SSR"

- Myriad small CSS improvements and animation fixes, but also:

- <iframe> lazy loading

- Clear-Site-Data for Service Worker use at scale

- Web Codecs for video (but not audio)

- WASM SIMD for better ML and games

- Compression Streams

- Reporting API (for learning about crashes and metrics reporting)

- Screen Orientation & Screen Wake Lock APIs (critical for games)

- Offscreen Canvas (but only 2D, which isn't what folks really need)

- Critical usability and quality fixes for WebRTC
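For teams deciding what they can now rely on, a rough feature-detection pass is one way to check a list like this against a real browser. The sketch below is illustrative only: the mapping from feature names to global interface names is an assumption made for the example, not something taken from Apple's release notes, and `detectFeatures` is a hypothetical helper. The presence of a global also says nothing about completeness (Offscreen Canvas is 2D-only here, and Web Push only works for installed PWAs).

```typescript
// Illustrative mapping from features named in the list to the global
// interface each one is commonly exposed through. This table is an
// assumption for the sketch, not an official compatibility matrix.
const FEATURE_GLOBALS: { [feature: string]: string } = {
  "Web Push": "PushManager",
  "Compression Streams": "CompressionStream",
  "Web Codecs (video)": "VideoDecoder",
  "Offscreen Canvas": "OffscreenCanvas",
  "Reporting API": "ReportingObserver",
};

interface FeatureReport {
  supported: string[];
  missing: string[];
}

// Checks which of the globals above exist on the given scope object.
// Passing the scope in (rather than reading a global directly) keeps the
// function usable in workers, windows, and tests with a stand-in object.
function detectFeatures(scope: object): FeatureReport {
  const report: FeatureReport = { supported: [], missing: [] };
  for (const feature in FEATURE_GLOBALS) {
    const globalName = FEATURE_GLOBALS[feature];
    if (globalName in scope) {
      report.supported.push(feature);
    } else {
      report.missing.push(feature);
    }
  }
  return report;
}
```

In a page this might be called as `detectFeatures(globalThis)`. APIs that hang off `navigator`, such as badging or wake lock, would need a separate check.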

A number of improvements look promising, but remain exclusive to macOS and iPadOS:

- Fullscreen API fixes

- AVIF and AV1 support

The lack of iOS support for Fullscreen API on <canvas> elements continues to harm game makers; likewise, the lack of AVIF and AV1 holds back media and streaming businesses.

Regardless, Safari 16.4 is astonishingly dense with delayed features, inadvertently emphasising just how far behind WebKit has remained for many years and how effective the Blink Launch Process has been in allowing Chromium to ship responsibly while consensus was withheld in standards by Apple.

The requirements of that process accelerated Apple's catch-up implementations by mandating proof of developer enthusiasm for features, extensive test suites, and accurate specifications. This collateral put the catch-up process on rails for Apple.

The intentional, responsible leadership of Blink was no accident, but to see it rewarded so definitively is gratifying.

The size of the release was expected in some corners, owing to the torrent of WebKit blog posts over the last few weeks:

- Web Share changes

- Form participation for Web Components

- CSS Nesting (not enabled for Beta)

- Declarative Shadow DOM

- User Activation API changes

- Web Push API for iOS

This is a lot, particularly considering that Apple has upped the pace of new releases to once every eight weeks (or thereabouts) over the past year and a half.

Good Things Come In Sixes

Leading browsers moved to a six-week update cadence by 2011 at the latest, routinely delivering fixes at a quick clip. It took another decade for Apple to finally adopt modern browser engineering and deployment practices.

Starting in September 2021, Safari moved to an eight-week cadence. This is a sea change all its own.

Before Safari 15, Apple only delivered two substantial releases per year, a pattern that had been stable since 2016:

- New features were teased at WWDC in the early summer

- They landed in the Fall alongside a new iOS version

- A second set of small features trickled out the next Spring

For a decade, two releases per year meant that progress on WebKit bugs was a roulette that developers lost by default.

In even leaner years (2012-2015), a single Fall release was all we could expect. This excruciating cadence affected Safari along with every other iOS browser forced to put its badge on Apple's sub-par product.

Contrast Apple's manufactured scarcity around bug fix information with the open bug tracking and reliable cadence of delivery from leading browsers. Cupertino manages the actual work of Safari engineers through an Apple-internal system ("Radar"), making public bug reports a sort of parallel track. Once an issue is imported to a private Radar bug it's more likely to get developer attention, but this also obscures progress from view.

This lack of transparency is by design.

It provides Apple deniability while simultaneously setting low expectations, which are easier to meet. Developers facing showstopping bugs end up in a bind. Without competitive recourse, they can't even recommend a different browser because every iOS browser is forced to use WebKit, meaning every iOS browser is at least as broken as Safari.

Given the dire state of WebKit, and the challenges contributors face helping to plug the gaps, these heartbreaks have induced a learned helplessness in much of the web community. So little improved, for so long, that some assumed it never would.

But here we are, with six releases a year and WebKit accelerating the pace at which it's closing the (large) gap.

What Changed?

Many big-ticket items are missing from this release — iOS fullscreen API for <canvas>, Paint Worklets, true PWA installation APIs for competing browsers, Offscreen Canvas for WebGL, Device APIs (if only for installed web apps), etc. — but the pace is now blistering.

This is the power of just the threat of competition.

Apple's lawyers have offered claims in court and in regulatory filings defending App Store rapaciousness because, in their telling, iOS browsers provide an alternative. If developers don't like the generous offer to take only 30% of revenue, there's always Cupertino's highly capable browser to fall back on.

The only problem is that regulators ask follow-up questions like "is it?" and "what do developers think?"

Which they did.

TL;DR: it wasn't, and developers had lots to say.

This is, as they say, a bad look.

And so Apple hedged, slowly at first, but ever faster as 2021 bled into 2022 and the momentum of additional staffing began to pay dividends.

Headcount Is Destiny

Apple had the resources needed to build a world-beating browser for more than a decade. The choice to ship a slower, less secure, less capable engine was precisely that: a choice.

Starting in 2021, Apple made a different choice, opening up dozens of Safari team positions. From the 2023 perspective of pervasive tech layoffs, this might look like the same exuberant hiring Apple's competitors recently engaged in, but recall Cupertino had maintained extreme discipline about Safari staffing for nearly two decades. Feast or famine, Safari wouldn't grow, and Apple wouldn't put significant new resourcing into WebKit, no matter how far it fell behind.

The decision to hire aggressively, including some "big gets" in standards-land, indicates more is afoot, and the reason isn't that Tim lost his cool. No, this is a strategy shift. New problems needed new (old) solutions.

Apple undoubtedly hopes that a less egregiously incompetent Safari will blunt the intensity of arguments for iOS engine choice. Combined with (previously winning) security scaremongering, reduced developer pressure might allow Cupertino to wriggle out of needing to compete worldwide, allowing it to ring-fence progress to markets too small to justify browser development resources (e.g., just the EU).

Increased investment also does double duty in the uncertain near future. In scenarios where Safari is exposed to real competition, a more capable engine provides fewer reasons for web developers to recommend other browsers. It takes time to board up the windows before a storm, and if competition is truly coming, this burst of energy looks like a belated attempt to batten the hatches.

It's critical to Apple that narrative discipline with both developers and regulators is maintained. Dilatory attempts at catch-up only work if developers tell each other that these changes are an inevitable outcome of Apple's long-standing commitment to the web (remember the first iPhone!?!). An easily distracted tech press will help spread the idea that this was always part of the plan; nobody is making Cupertino do anything it doesn't want to do, never mind the frantic regulatory filings and legal briefings.

But what if developers see behind the veil? What if they begin to reflect and internalise Apple's abandonment of web apps after iOS 1.0 as an exercise of market power that held the web back for more than a decade?

That might lead developers to demand competition. Apple might not be able to ring-fence browser choice to a few geographies. The web might threaten Cupertino's ability to extract rents in precisely the way Apple represented in court that it already does.

Early Innings

Rumours of engine ports are afoot. The plain language of the EU's DMA is set to allow true browser choice on iOS. But the regulatory landscape is not at all settled. Apple might still prevent progress from spreading. It might yet sue its way to curtailing the potential size and scope of the market that will allow for the web to actually compete, and if it succeeds in that, no amount of fast catch-up in the next few quarters will pose a true threat to native.

Consider the omissions:

- PWA installation prompting

- Fullscreen for <canvas>

- Real Offscreen Canvas

- Improved codecs

- Web Transport

- WebGPU

- Device APIs

Depending on the class of app, any of these can be a deal-breaker, and if Apple isn't facing ongoing, effective competition it can just reassign headcount to other, "more critical" projects when the threat blows over. It wouldn't be the first time.

So, this isn't done. Not by a long shot.

Safari 16.4 is an admission that competition is effective and that Apple is spooked, but it isn't an answer. Only genuine browser choice will ensure the taps stay open.

FOOTNOTES

Apple's standards engineers have a long and inglorious history of stalling tactics in standards bodies to delay progress on important APIs, like Declarative Shadow DOM (DSD).

The idea behind DSD was not new, and the intensity of developer demand had only increased since Dimitri's 2015 sketch. A 2017 attempt to revive it was shot down in 2018 by Apple engineers without evidence or data.

Throughout this period, Apple would engage sparsely in conversations, sometimes only weighing in at biannual face-to-face meetings. It was gobsmacking to watch them argue that features were unnecessary directly to the developers in the room who were personally telling them otherwise. This was disheartening because a key goal of any proposal was to gain support from iOS. In a world where nobody else could ship-and-let-live, and where Mozilla could not muster an opinion (it did not ship Web Components until late 2018), any whiff of disinterest from Apple was sufficient to kill progress.

The phrase "stop-energy" is often misused, but the dampening effect of Apple on the progress of Web Components after 2015-2016's burst of V1 design energy was palpable. After that, the only Web Components features that launched in leading-edge browsers were those that an engineer and PM were willing to accept could only reach part of the developer base.

I cannot stress enough how effectively this slowed progress on Web Components. The pantomime of regular face-to-face meetings continued, but Apple just stopped shipping. What had been a grudging willingness to engage on new features became a stalemate.

But needs must.

In early 2020, after months of background conversations and research, Mason Freed posted a new set of design alternatives, which included extensive performance research. The conclusion was overwhelming: not only was Declarative Shadow DOM now in heavy demand by the community, but it would also make websites much faster.

The proposal looked shockingly like those sketched in years past. In a world where <template> existed and Shadow DOM V1 had shipped, the design space for Declarative Shadow DOM alternatives was not large; we just needed to pick one.

An updated proposal was presented to the Web Components Community Group in March 2020; Apple objected on spurious grounds, offering no constructive counter.[2]

Residual questions revolved around security implications of changing parser behaviour, but these were also straightforward. The first draft of Mason's Explainer even calls out why the proposal is less invasive than a whole new element.

Recall that Web Components and the <template> element themselves were large parser behaviour changes; the semantics for <template> even required changes to the long-settled grammar of XML (long story, don't ask). A drumbeat of (and proposals for) new elements and attributes post-HTML5 also represent identical security risks, and yet we barrel forward with them. These have notably included <picture>, <portal> (proposed), <fencedframe> (proposed), <dialog>, <selectmenu> (proposed), and <img srcset>.

The addition of <template shadowroot="open"> would, indeed, change parser behaviour, but not in ways that were unknowably large or unprecedented. Chromium's usage data, along with the HTTP Archive crawl HAR file corpus, provided ample evidence about the prevalence of patterns that might cause issues. None were detected.

And yet, at TPAC 2020, Apple's representatives continued to press the line that large security issues remained. This was all considered at length. Google's security teams audited the colossal volume of user-generated content Google hosts for problems and did not find significant concerns. And yet, Apple continued to apply stop-energy.

The feature eventually shipped with heavy developer backing as part of Chromium 90 in April 2021 but without consensus. Apple persistently repeated objections that had already been answered with patient explication and evidence.

Cupertino is now implementing this same design, and Safari will support DSD soon.
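For readers who have not seen the design under dispute, the following sketch shows roughly what server-rendered Declarative Shadow DOM output looks like. The `renderGreeting` and `escapeHtml` helpers and the `user-greeting` element are hypothetical names invented for illustration. The attribute name is a parameter because the `shadowroot` spelling quoted in this footnote is what Chromium initially shipped, while the attribute was later standardised as `shadowrootmode`.

```typescript
// Escapes text so it can be placed safely inside HTML element content.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Emits a custom element whose shadow root is declared in markup, so the
// parser can attach it without waiting for any script to run.
// `user-greeting` is a made-up element name for this sketch.
function renderGreeting(name: string, attr: string = "shadowrootmode"): string {
  return [
    "<user-greeting>",
    "  <template " + attr + '="open">',
    "    <style>p { font-weight: bold; }</style>",
    "    <p>Hello, <slot></slot>!</p>",
    "  </template>",
    "  " + escapeHtml(name), // light-DOM content, projected into the <slot>
    "</user-greeting>",
  ].join("\n");
}
```

The point of the design is visible in the output: the styles and structure arrive as plain HTML, which is why it matters for "SSR" and for performance.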

This has not been the worst case of Apple's deflection and delay — looking at you, Push Notifications — but serves as an exemplar. Problem solvers have been forced into a series of high-stakes gambits to solve developer problems by Apple (and, to a lesser extent, Mozilla) over Cupertino's dozen years of engine disinvestment.

Even in Chromium, DSD was delayed by several quarters. Because of the Apple Browser Ban, cross-OS availability was postponed by two years. The fact that Apple will ship DSD without changes and without counterproposals across the long arc of obstruction implies claims of caution were, at best, overstated.

The only folks to bring data to the party were Googlers and web developers. No new thing was learned through groundless objection. No new understanding was derived from the delay. Apple did no research about the supposed risks. It has yet to argue why it's safe now, but wasn't then.

So let's call it what it was: concern trolling.

Uncritical acceptance of the high-quality design it had long delayed is an admission, of sorts. It demonstrates Apple's ennui about developer and user needs (until pressed), paired with great skill at deflection.

The playbook is simple:

- Use opaque standards processes to make it look like occasional attendance at a F2F meeting is the same thing as good-faith co-engineering.

- "Just ask questions" when overstretched or uninterested in the problem.

- Spread FUD about the security or privacy of a meticulously-vetted design.

- When all else fails, say you will formally object and then claim that others are "shipping whatever they want" and "not following standards" when they carefully launch a specced and tested design you were long consulted about, but withheld good faith engagement to improve.

The last step works because only insiders can distinguish between legitimate critiques and standards process jockeying. Hanging the first-mover risk around the neck of those working to solve problems is nearly cost-free when you can also prevent designs from moving forward in standards, paired with a market veto (thanks to anti-competitive shenanigans).

Play this dynamic out over dozens of features across a decade, and you'll better understand why Chromium participants get exercised about the responsibility theatre various Apple engineers put on to avoid engaging substantively, while simultaneously blocking all forward movement. Understood in context, it decodes as delay and deflection; a way to avoid using standards bodies to help actually solve problems.

Cupertino has paid no price for deploying these smoke screens, thanks to the Apple Browser Ban and a lack of curiosity in the press. Without those shields, Apple engineers would have had to offer convincing arguments from data for why their positions were correct. Instead, they have whatabouted for over three years, only to suddenly implement proposals they recently opposed when the piercing gaze of regulators finally fell on WebKit.[3] ⇐

The presence or absence of a counterproposal when objecting to a design is a primary indicator of seriousness within a standards discussion. All parties will have been able to examine proposals before any meeting, and in groups that operate by consensus, blocking objections are understood to be used sparingly by serious parties.

It's normal for disagreements to surface over proposed designs, but engaged and collaborative counter-parties will offer soft concerns ("we won't block on this, but we think it could be improved...") or offer to bring a counterproposal. The benefits of a concrete counter are large. It demonstrates good faith in working to solve the problem and signals a willingness to ship the offered design. Threats to veto, or never implement a specific proposal, are just not done in the genteel world of web standards.

Over the past decade, making veto threats while offering neither data nor a counterproposal has become a hallmark of Apple's web standards footprint. It's a bad look, but it continues because nobody in those rooms wants to risk pissing off Cupertino. Your narrator considered a direct accounting of just the consequences of these tactics a potentially career-ending move; that's how serious the stakes are.

The true power of a monopoly in standards is silence — the ability to get away with things others blanch at because they fear you'll hold an even larger group of hostages next time. ⇐

Apple has rolled out the same playbook in dozens of areas over the last decade, and we can learn a few things from this experience.

First, Apple corporate does not care about the web, no matter how much the individuals that work on WebKit (deeply) care. Cupertino's artificial bandwidth constraints on WebKit engineering ensured that it implements only when pressured.

That means that external pressure must be maintained. Cupertino must fear losing their market share for doing a lousy job. That's a feeling that hasn't been felt near the intersection of I-280 and CA Route 85 in a few years. For the web to deliver for users, gatekeepers must sleep poorly.

Lastly, Apple had the capacity and resources to deliver a richer web for a decade but simply declined. This was a choice — a question of will, not of design correctness or security or privacy.

Safari 16.4 is evidence, an admission that better was possible, and the delaying tactics were a sort of gaslighting. Apple disrespects the legitimate needs of web developers when allowed, so it must not be.

Lack of competition was the primary reason Apple feared no consequence for failing to deliver. Apple's protectionism towards Safari's participation-prize under-achievement hasn't withstood even the faintest whiff of future challengers, which should be an enduring lesson: no vendor must ever be allowed to deny true and effective browser competition. ⇐

The Market for Lemons

For most of the past decade, I have spent a considerable fraction of my professional life consulting with teams building on the web.

It is not going well.

Not only are new services being built to a self-defeatingly low UX and performance standard, existing experiences are pervasively re-developed on unspeakably slow, JS-taxed stacks. At a business level, this is a disaster, raising the question: "why are new teams buying into stacks that have failed so often before?"

In other words, "why is this market so inefficient?"

George Akerlof's most famous paper introduced economists to the idea that information asymmetries distort markets and reduce the quality of goods because sellers with more information can pass off low-quality products as more valuable than informed buyers appraise them to be. (PDF, summary)

Customers that can't assess the quality of products pay too much for poor quality goods, creating a disincentive for high-quality products to emerge while working against their success when they do. For many years, this effect has dominated the frontend technology market. Partisans for slow, complex frameworks have successfully marketed lemons as the hot new thing, despite the pervasive failures in their wake, crowding out higher-quality options in the process.[1]

These technologies were initially pitched on the back of "better user experiences", but have utterly failed to deliver on that promise outside of the high-management-maturity organisations in which they were born.[2] Transplanted into the wider web, these new stacks have proven to be expensive duds.

The complexity merchants knew their environments weren't typical, but sold their highly specialised tools to folks shopping for general purpose solutions anyway. They understood most sites lack latency budgeting, dedicated performance teams, hawkish management reviews, ship gates to prevent regressions, and end-to-end measurements of critical user journeys. They grasped that massive investment in controlling complexity is the only way to scale JS-driven frontends, but warned none of their customers.

They also knew that their choices were hard to replicate. Few can afford to build and maintain 3+ versions of a site ("desktop", "mobile", and "lite"), and vanishingly few web experiences feature long sessions and login-gated content.[3]

Armed with this knowledge, they kept the caveats to themselves.

What Did They Know and When Did They Know It?

This information asymmetry persists; the worst actors still haven't levelled with their communities about what it takes to operate complex JS stacks at scale. They did not signpost the delicate balance of engineering constraints that allowed their products to adopt this new, slow, and complicated tech. Why? For the same reason used car dealers don't talk up average monthly repair costs.

The market for lemons depends on customers having less information than those selling shoddy products. Some who hyped these stacks early on were earnestly ignorant, which is forgivable when recognition of error leads to changes in behaviour. But that's not what the most popular frameworks of the last decade did.

As time passed, and the results continued to underwhelm, an initial lack of clarity was revealed to be intentional omission. These omissions have been material to both users and developers. Extensive evidence of these failures was provided directly to their marketeers, often by me. At some point (certainly by 2017) the omissions veered into intentional prevarication.

Faced with the dawning realisation that this tech mostly made things worse, not better, the JS-industrial-complex pulled an Exxon.

They could have copped to an honest error, admitted that these technologies require vast infrastructure to operate; that they are unscalable in the hands of all but the most sophisticated teams. They did the opposite, doubling down, breathlessly announcing vapourware year after year to forestall critical thinking about fundamental design flaws. They also worked behind the scenes to marginalise those who pointed out the disturbing results and extraordinary costs.

Credit where it's due, the complexity merchants have been incredibly effective in one regard: top-shelf marketing discipline.

Over the last ten years, they have worked overtime to make frontend an evidence-free zone. The hucksters knew that discussions about performance trade-offs would not end with teams investing more in their technology, so boosterism and misdirection were aggressively substituted for evidence and debate. Like a curtain of Halon descending to put out the fire of engineering dialogue, they blanketed the discourse with toxic positivity. Those who dared speak up were branded "negative" and "haters", no matter how much data they lugged in tow.

Sandy Foundations

Astonishingly, gobsmackingly effective bullshit, but nonsense nonetheless. There was a point to it, though. Playing for time allowed the bullshitters to punt introspection of the always-wrong assumptions they'd built their entire technical edifice on:

- CPUs get faster every year (they do not)

- Organisations can manage these complex stacks (they cannot)

In time, these misapprehensions would become cursed articles of faith.

All of this was falsified by 2016, but nobody wanted to turn on the house lights while the JS party was in full swing. Not the developers being showered with shiny tools and boffo praise for replacing "legacy" HTML and CSS that performed fine. Not the scoundrels peddling foul JavaScript elixirs and potions. Not the managers that craved a check to cut and a rewrite to take credit for in lieu of critical thinking about user needs and market research.

Consider the narrative Crazy Ivans that led to this point.

It's challenging to summarise a vast discourse over the span of a decade, particularly one as dense with jargon and acronyms as that which led to today's status quo of overpriced failure. These are not quotes, but vignettes of distinct epochs in our tortured journey:

- "Progressive Enhancement has failed! Multiple pages are slow and clunky!

  SPAs are a better user experience, and managing state is a big problem on the client side. You'll need a tool to help structure that complexity when rendering on the client side, and our framework works at scale"

- "Instead of waiting on the JavaScript that will absolutely deliver a superior SPA experience...someday...why not render on the server as well, so that there's something for the user to look at while they wait for our awesome and totally scalable JavaScript to collect its thoughts?"

  [ an intro to "isomorphic javascript", a.k.a. "Server-Side Rendering", a.k.a. "SSR" ]

- "SPAs are a better experience, but everyone knows you'll need to do all the work twice because SSR makes that better experience minimally usable. But even with SSR, you might be sending so much JS that things feel bad. So give us credit for a promise of vapourware for delay-loading parts of your JS."

- "SPAs are a better experience. SSR is vital because SPAs take a long time to start up, and you aren't using our vapourware to split your code effectively. As a result, the main thread is often locked up, which could be bad?

  Anyway, this is totally your fault and not the predictable result of us failing to advise you about the controls and budgets we found necessary to scale JS in our environment. Regardless, we see that you lock up main threads for seconds when using our slow system, so in a few years we'll create a parallel scheduler that will break up the work transparently"

  [ 2017's beautiful overview of a fated errand and 2018's breathless re-brand ]

- "The scheduler isn't ready, but thanks for your patience; here's a new way to spell your component that introduces new timing issues but doesn't address the fact that our system is incredibly slow, built for browsers you no longer support, and that CPUs are not getting faster"

- "Now that you're 'SSR'ing your SPA and have re-spelt all of your components, and given that the scheduler hasn't fixed things and CPUs haven't gotten faster, why not skip SPAs and settle for progressive enhancement of sections of a document?"

  [ "islands", "server components", etc. ]

It's the Steamed Hams of technology pitches.

Like Chalmers, teams and managers often acquiesce to the contradictions embedded in the stacked rationalisations. Together, the community invented dozens of reasons to look the other way, from the theoretically plausible to the fully imaginary.

But even as the complexity merchant's well-intentioned victims meekly recite the koans of trickle-down UX — it can work this time, if only we try it hard enough! — the evidence mounts that "modern" web development is, in the main, an expensive failure.

The baroque and insular terminology of the in-group is a clue. Its functional purpose (outside of signalling) is to obscure furious plate spinning. The tech isn't working, but admitting as much would shrink the market for lemons.

You'd be forgiven for thinking the verbiage was designed to obfuscate. Little comfort, then, that folks selling new approaches must now wade through waist-deep jargon excrement to argue for the next increment of complexity.

The most recent turn is as predictable as it is bilious. Today's most successful complexity merchants have never backed down, never apologised, and never come clean about what they knew about the level of expense involved in keeping SPA-oriented technologies in check. But they expect you'll follow them down the next dark alley anyway.

And why not? The industry has been down to clown for so long it's hard to get in the door if you aren't wearing a red nose.

The substitution of heroic developer narratives for user success happened imperceptibly. Admitting it was a mistake would embarrass the good and the great alike. Once the lemon sellers embedded the data-light idea that improved "Developer Experience" ("DX") leads to better user outcomes, improving "DX" became an end unto itself. Many who knew better felt forced to play along.

The long lead time for falsifying trickle-down UX was a feature, not a bug; they don't need you to succeed, only to keep buying.

As marketing goes, the "DX" bait-and-switch is brilliant, but the tech isn't delivering for anyone but developers.[4] The highest goal of the complexity merchants is to put brands on showcase microsites and to make acqui-hiring failing startups easier. Performance and success of the resulting products is merely a nice-to-have.

Denouement

You'd think there would be data, that we would be awash in case studies and blog posts attributing product success to adoption of SPAs and heavy frameworks in an incontrovertible way.

And yet, after more than a decade of JS hot air, the framework-centric pitch is still phrased in speculative terms because there's no there there. The complexity merchants can't cop to the fact that management competence and lower complexity — not baroque technology — are determinative of product and end-user success.

The simmering, widespread failure of SPA-premised approaches has belatedly forced the JS colporteurs to adapt their pitches. In each iteration, they must accept a smaller rhetorical lane to explain why this stack is still the future.

The excuses are running out.

At long last, the journey has culminated with the rollout of Core Web Vitals. It finally provides an objective quality measurement that prospective customers can use to assess frontend architectures.

It's no coincidence the final turn away from the SPA justification has happened just as buyers can see a linkage between the stacks they've bought and the monetary outcomes they already value; namely SEO. The objective buyer, circa 2023, will understand heavy JS stacks as a regrettable legacy, one that teams who have hollowed out their HTML and CSS skill bases will pay for dearly in years to come.

No doubt, many folks who know their JS-first stacks are slow will do as Akerlof predicts, and obfuscate for as long as possible. The market for lemons is, indeed, mostly a resale market, and the excesses of our lost decade will not be flushed from the ecosystem quickly. Beware tools pitching "100 on Lighthouse" without checking the real-world Core Web Vitals results.
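The distinction between a lab score and field results can be made concrete. The sketch below rates a set of real-user samples at the 75th percentile against the published Core Web Vitals thresholds for LCP, FID, and CLS (FID being the responsiveness metric as of 2023; it has since been superseded by INP). The function names and any sample data are hypothetical, and the nearest-rank percentile is a simplification of how field datasets are aggregated.

```typescript
type Rating = "good" | "needs-improvement" | "poor";

interface Threshold {
  good: number; // p75 at or below this value rates "good"
  poor: number; // p75 above this value rates "poor"
}

// Published Core Web Vitals thresholds. LCP and FID are in milliseconds;
// CLS is a unitless layout-shift score.
const THRESHOLDS: { [metric: string]: Threshold } = {
  LCP: { good: 2500, poor: 4000 },
  FID: { good: 100, poor: 300 },
  CLS: { good: 0.1, poor: 0.25 },
};

// Nearest-rank 75th percentile of a non-empty list of samples.
function percentile75(samples: number[]): number {
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil(0.75 * sorted.length);
  return sorted[rank - 1];
}

// Rates field samples for one metric the way a buyer might when checking
// whether a stack delivers for real users rather than in a lab run.
function rateMetric(metric: string, samples: number[]): Rating {
  const threshold = THRESHOLDS[metric];
  if (!threshold) throw new Error("Unknown metric: " + metric);
  if (samples.length === 0) throw new Error("No samples for " + metric);
  const p75 = percentile75(samples);
  if (p75 <= threshold.good) return "good";
  if (p75 <= threshold.poor) return "needs-improvement";
  return "poor";
}
```

A site can score well in a single lab run on a fast machine and still rate "poor" here, because the 75th percentile reflects the slower devices and networks real users bring.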

Shrinkage

A subtle aspect of Akerlof's theory is that markets in which lemons dominate eventually shrink. I've warned for years that the mobile web is under threat from within, and the depressing data I've cited about users moving to apps and away from terrible web experiences aligns with that theory.

When websites feel like categorically worse experiences to the folks who write the checks, why should anyone expect them to spend a lot on them? And when websites stop being where accurate information and useful services are, will anyone still believe there's a future in web development?

The lost decade we've suffered at the hands of lemon purveyors isn't just a local product travesty; it's also an ecosystem-level risk. Forget AI putting web developers out of jobs; JS-heavy web stacks have been shrinking the future market for your services for years.

Adam Smith's invisible hand — the idea that free markets lead to efficiency as if guided by unseen forces — is invisible, at least in part, because it is not there.

But dreams die hard.

I'm already hearing laments from folks who have been responsible citizens of framework-landia lo these many years. Oppressed as they were by the lemon vendors, they worry about babies being thrown out with the bathwater, and I empathise. But for the sake of users, and for the new opportunities for the web that will open up when experiences finally improve, I say "chuck those tubs".

Chuck 'em hard, and post the photos of the unrepentant bastards that tried to palm off this nonsense behind the cash register.

We lost a decade to smooth talkers and hollow marketeering; folks who failed the most basic test of intellectual honesty: signposting known unknowns. Instead of engaging honestly with the emerging evidence, they sold lemons and shrunk the market for better solutions. Furiously playing catch-up to stay one step ahead of market rejection, frontend's anguished, belated return to quality has been hindered at every step by those who would stand to lose if their false premises and hollow promises were to be fully re-evaluated.

Toxic mimicry and recalcitrant ignorance must not be rewarded.

Vendors' random walk through frontend choices may eventually lead them to be right twice a day, but that's not a reason to keep following their lead. No, we need to move our attention back to the folks that have been right all along. The people who never gave up on semantic markup, CSS, and progressive enhancement for most sites. The people who, when slinging JS, have treated it as special occasion food. The tools and communities whose culture puts the user ahead of the developer and holds evidence of doing better for users in the highest regard.1:1

It's not healing, and it won't be enough to nurse the web back to health, but tossing the Vercels and the Facebooks out of polite conversation is, at least, a start.

Deepest thanks to Bruce Lawson, Heydon Pickering, Frances Berriman, and Taylor Hunt for their thoughtful feedback on drafts of this post.

FOOTNOTES

You wouldn't know it from today's frontend discourse, but the modern era has never been without high-quality alternatives to React, Angular, Ember, and other legacy desktop-era frameworks.

In a bazaar dominated by lemon vendors, many tools and communities have been respectful of today's mostly-mobile users at the expense of their own marketability. These are today's honest brokers and they deserve your attention far more than whatever solution to a problem created by React that the React community is on about this week.

This has included JS frameworks with an emphasis on speed and low overhead vs. cadillac comfort of first-class IE8 support:

It's possible to make slow sites with any of these tools, but the ethos of these communities is that what's good for users is essential while developer luxuries are nice-to-have — even as they compete furiously for developer attention. This uncompromising focus on real quality is what has been muffled under the blanket complexity merchants have thrown over today's frontend discourse.

Similarly, the SPA orthodoxy that precipitated the market for frontend lemons has been challenged both by the continued success of "legacy" tools like WordPress, as well as a new crop of HTML-first systems that provide JS-friendly authoring but output that's largely HTML and CSS:

The essential thing about tools that succeed more often than not is starting with simple output. The difficulty in managing what you've explicitly added based on incremental need, vs. what you've been bequeathed by an inscrutable Rube Goldberg-esque metaframework, is an order of magnitude in cost and usability. Teams that adopt tools with simpler default output start with simpler problems that tend to have better-understood solutions. ⇐ ⇐

Organisations that manage their systems (not the other way around) can succeed with any set of tools. They might pick some elegant ones and some awkward ones, but the sine qua non of their success isn't what they pick up, it's how they hold it.

Recall that Facebook became a multi-billion dollar, globe-striding colossus using PHP and C++.

The differences between FB and your applications are likely legion. This is why it's fundamentally lazy and wrong for TLs and PMs to accept any sort of argument along the lines of "X scales, FB uses it".

Pigs can fly; it's only a matter of how much force you apply, but if you aren't willing to fund creation of a large enough trebuchet, it's unlikely that porcine UI will take wing in your organisation. ⇐

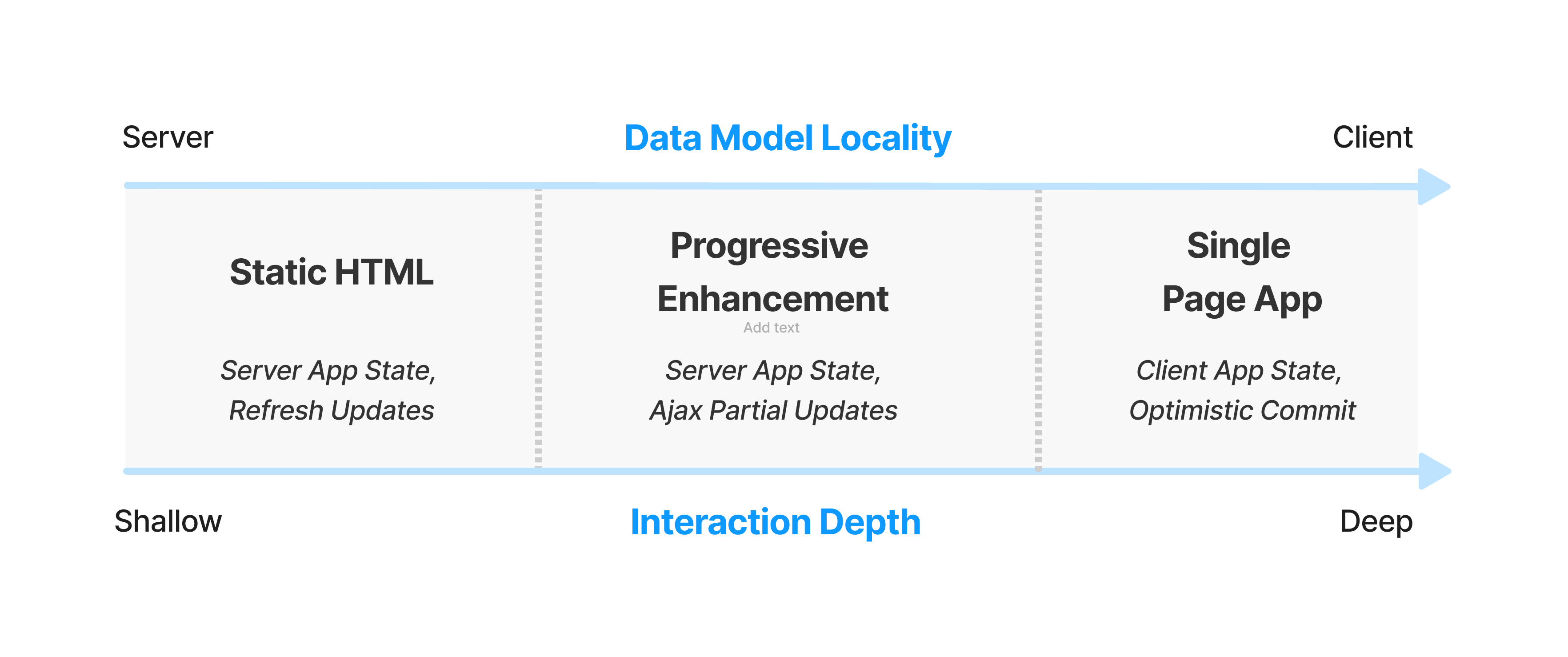

I hinted last year at an under-developed model for how we can evolve our discussion around web performance to take account of the larger factors that distinguish different kinds of sites.

While it doesn't account for many corner-cases, and is insufficient on its own to describe multi-modal experiences like WordPress (a content-producing editor for a small fraction of important users vs. shallow content-consumption reader experience for most), I wind up thinking about the total latency incurred in a user's session divided by the number of interactions. This raises a follow-on question: what's an interaction? Elsewhere, I've defined it as "turns through the interaction loop", but it can be more easily described as "taps or clicks that involve your code doing work". This helpfully excludes scrolling, but includes navigations.

ANYWAY, all of this nets out a session-depth weighted intuition about when and where heavyweight frameworks make sense to load up-front:

Sites with shorter average sessions can afford less JS up-front. Social media sites that gate content behind a login (and can use the login process to pre-load bundles), and which have tons of data about session depth — not to mention ML-based per-user bundling, staffed performance teams, ship gates to prevent regressions, and the funding to build and maintain at least 3 different versions of the site — can afford to make fundamentally different choices about how much to load up-front and for which users.

The rest of us, trying to serve all users from a single codebase, need to prefer conservative choices that align with our management capacity to keep complexity in check. ⇐

The "DX" fixation hasn't even worked for developers, if we're being honest. Teams I work with suffer eye-watering build times, shockingly poor code velocity, mysterious performance cliffs, and some poor sod stuck in a broom closet that nobody bothers, lest the webs stop packing.

And yet, these same teams are happy to tell me they couldn't live without the new ball-and-chain.

One group, after weeks of debugging a particularly gnarly set of issues brought on by their preposterously inefficient "CSS-in-JS" solution, combined with React's penchant for terrible data flow management, actually said to me that they were so glad they'd moved everything to hooks because it was "so much cleaner" and that "CSS-in-JS" was great because "now they could reason about it"; never mind the weeks they'd just lost to the combination of dirtier callstacks and harder-to-reason-about runtime implications of heisenbug styling.

Nothing about the lived experience of web development has meaningfully improved, except perhaps for TypeScript adding structure to large codebases. And yet, here we are. Celebrating failure as success while parroting narratives about developer productivity that have no data to back them up.

Sunk-cost fallacy rules all we survey. ⇐

The Performance Inequality Gap, 2023

Last month, I had the honour of joining what seemed like the entire web performance community at performance.now() in Amsterdam.

The talks are up on YouTube behind a paywall, but my slides are mirrored here1:

The talk, like this post, is an update on network and CPU realities this series has documented since 2017. More importantly, it is also a look at what the latest data means for our collective performance budgets.

2023 Content Targets

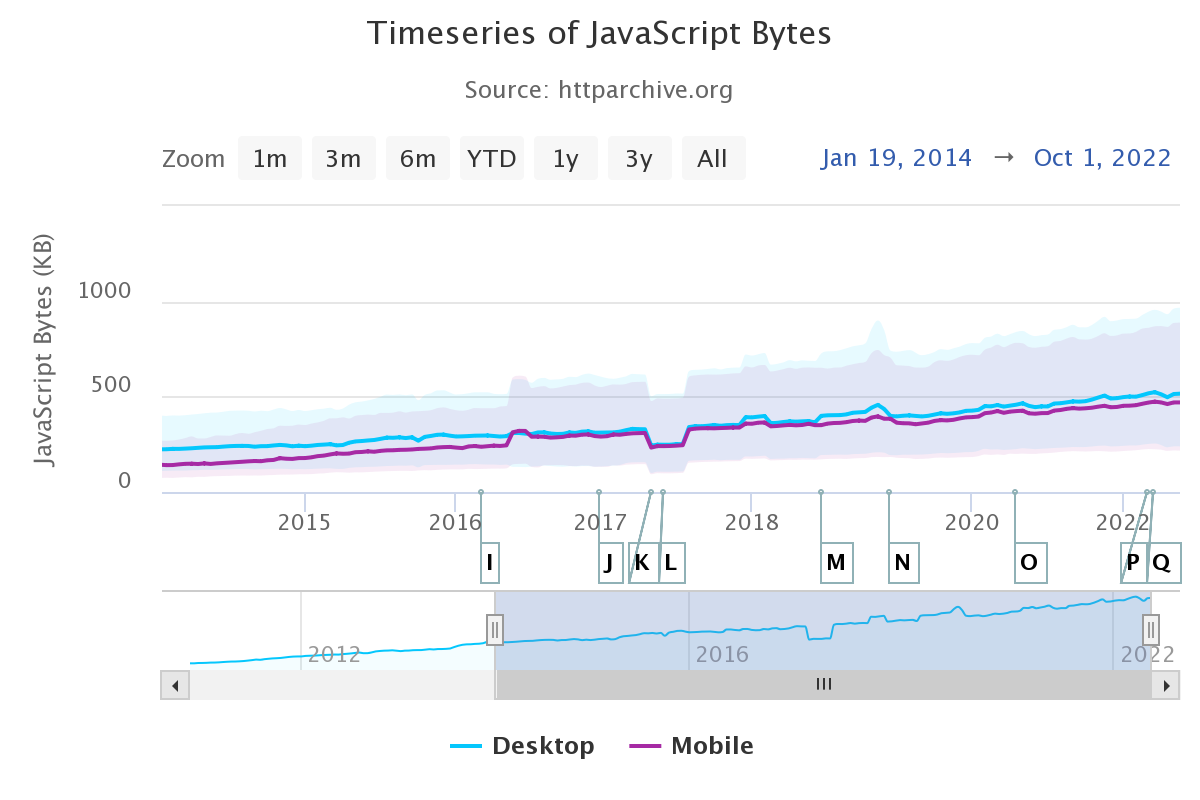

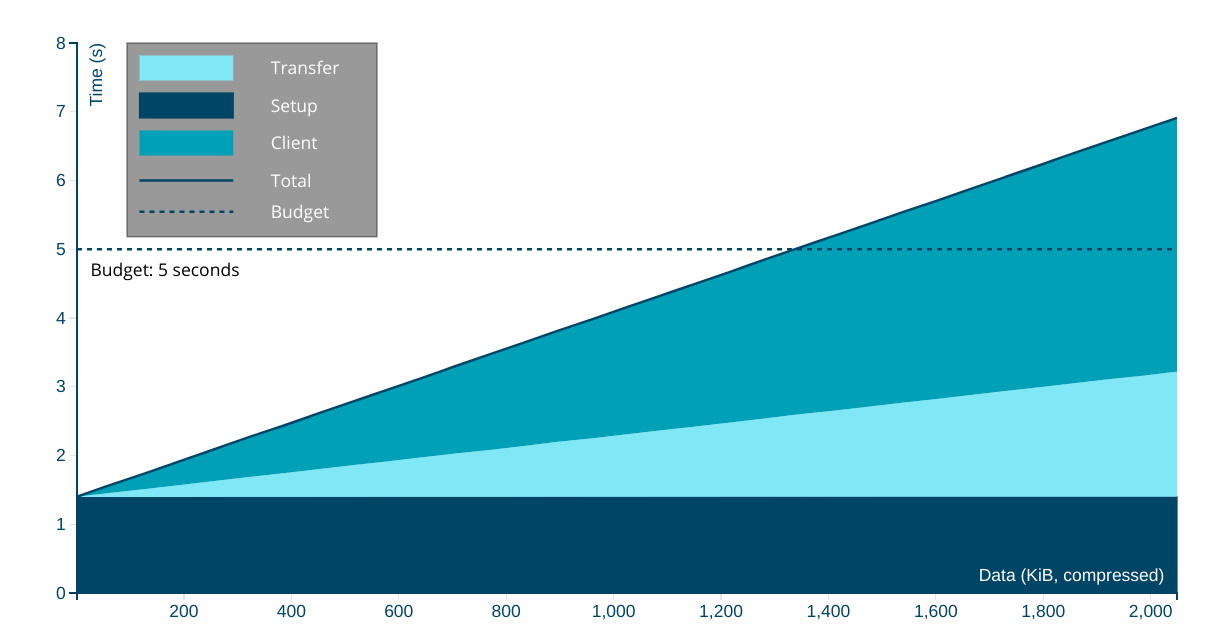

In the interest of brevity, here's what we should be aiming to send over the wire per page in 2023 to reach interactivity in less than 5 seconds on first load:23

- ~150KiB of HTML, CSS, images, and render-blocking font resources

- No more than ~300-350KiB of JavaScript

This implies a heavy JS payload, which most new sites suffer from for reasons both bad and beyond the scope of this post. With a more classic content profile — mostly HTML and CSS — we can afford much more in terms of total data, because JavaScript is still the costliest way to do things and CPUs at the global P75 are not fast.

These estimates also assume some serving discipline, including:

- No more than two HTTP connections, implying HTTP/2

- Compressing text resources

- Reasonable content structure

These targets are anchored to global estimates for networks and devices at the 75th percentile4.

More on how those estimates are constructed in a moment, but suffice to say, it's messy. Where the data is weak, we should always prefer conservative estimates.

Based on trends and historical precedent, there's little reason for optimism that things are better than they seem. Indeed, misplaced optimism about disk, network, and CPU resources is the background music to frontend's lost decade.

It is not an exaggeration to say that modern frontend is so enamoured of post-scarcity fairy tales that it is mortgaging the web's future for another night drinking at the JavaScript party.

We're burning our inheritance and polluting the ecosystem on shockingly thin, perniciously marketed claims of "speed" and "agility" and "better UX" that have not panned out at all. Instead, each additional layer of JavaScript cruft has dragged us further from living within the limits of what we can truly afford.

This isn't working for users or for businesses that hire developers hopped up on Facebook's latest JavaScript hopium. A correction is due.

Desktop

In years past, I haven't paid as much attention to the situation on desktops. But researching this year's update has turned up sobering facts that should colour our collective understanding.

Devices

From Edge's telemetry, we see that nearly half of devices fall into our "low-end" designation, which means that they have:

- HDDs (not SSDs)

- 2-4 CPU cores

- 4GB RAM or less

Add to this the fact that desktop devices have a lifespan between five and eight years, on average. This means the P75 device was sold in 2016.

As this series has emphasised in years past, Average Selling Price (ASP) is performance destiny. To understand our P75 device, we must imagine what the ASP device was at the P75 age.5 That is, what was the average device in 2016? It wasn't a $2,000 M1 MacBook Pro, that's for sure.

No, it was a $600-$700 device. Think (best-case) 2-core, 4-thread married to slow, spinning rust.

Networks

Desktop-attached networks are hugely variable worldwide, including in the U.S., where the shocking effects of digital red-lining continue to this day. And that's on top of globally uncompetitive service, thanks to shockingly lax regulation and legalised corruption.

As a result, we are sticking to our conservative estimates for bandwidth in line with WebPageTest's throttled Cable profile of 5Mbps bandwidth and ~25ms RTT.

Speeds will be much slower than advertised in many areas, particularly for rural users.

Mobile

We've been tracking the mobile device landscape more carefully over the years and, as with desktop, ASPs today are tomorrow's performance destiny. Thankfully, device turnover is faster, with the average handset surviving only three to four years.

Devices

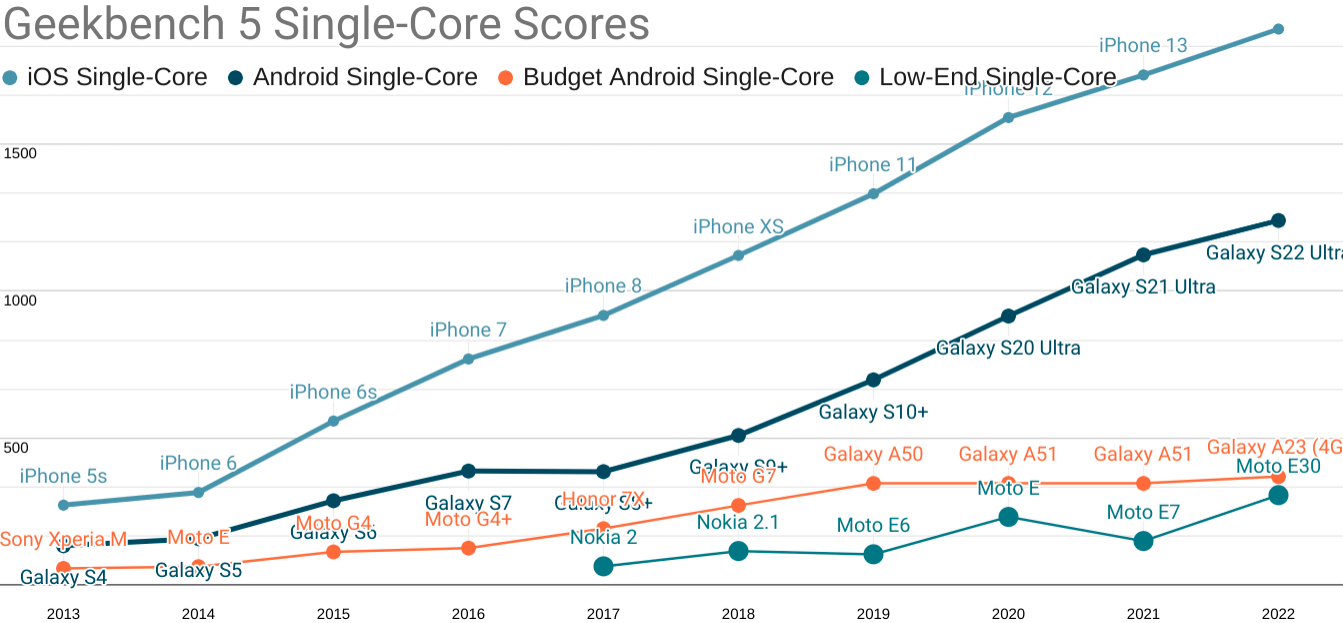

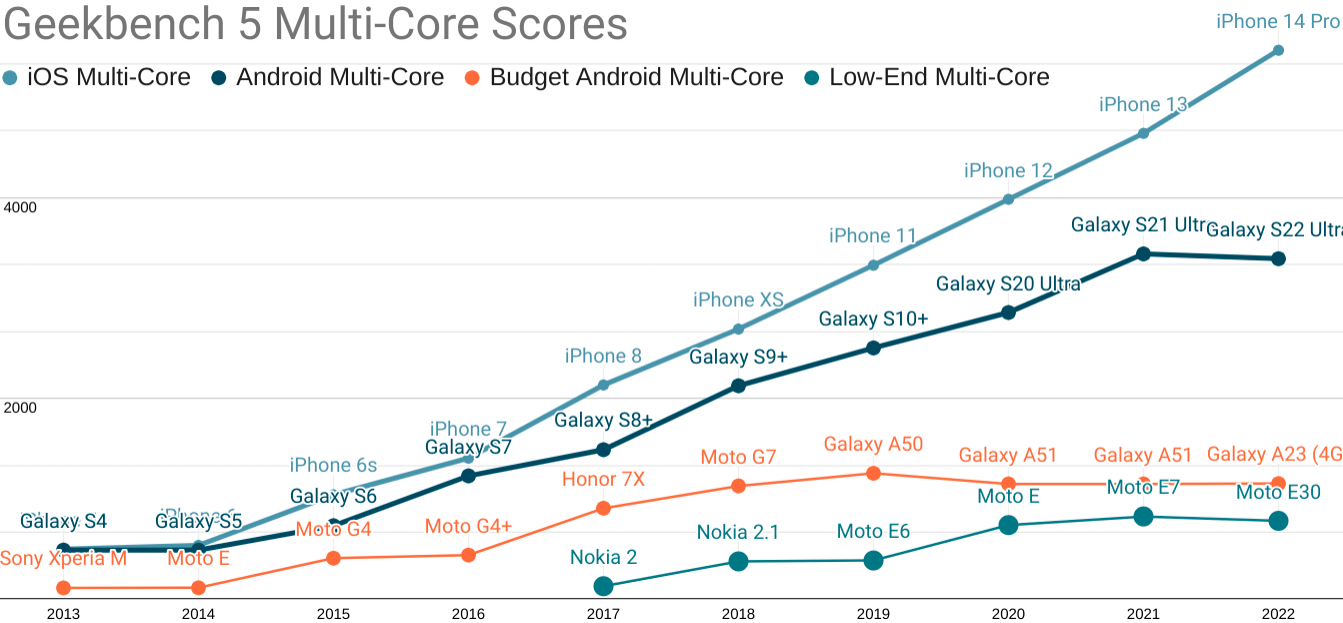

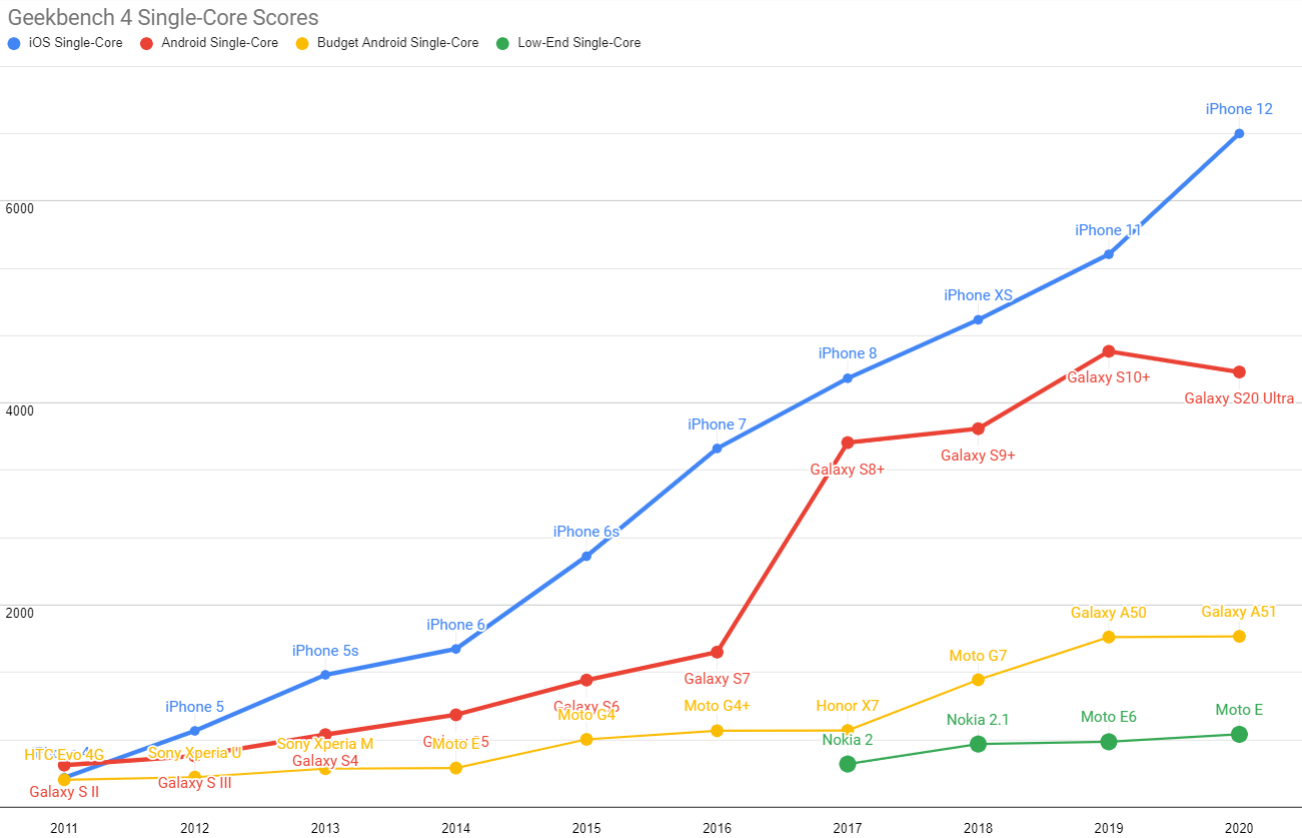

Without beating around the bush, our ASP 2019 device was an Android that cost between $300 and $350, new and unlocked. It featured poor single and multi-core performance, and the high-end experience has continued to pull away from it since:

Updated Geekbench 5 single-core scores for each mobile price point. TL;DR: your iPhone isn't real life.

Android ecosystem SoCs fare slightly better on multi-core performance, but the Performance Inequality Gap is growing there, too.

As you can see, the gap is widening, in part because the high end has risen dramatically in price.

The best analogues you can buy for a representative P75 device today are ~$200 Androids from the last year or two, such as the Samsung Galaxy A50 and the Nokia G11.

These devices feature:

- Eight slow, big.LITTLE ARM cores (A75+A55, or A73+A53) built on last-generation processes with very little cache

- 4GiB of RAM

- 4G radios

These are depressingly similar specs to devices I recommended for testing in 2017. Qualcomm has some 'splainin to do.

5G is still in its early diffusion phase, and the inclusion of a 5G radio is hugely randomising for device specs at today's mid-market price-point. It'll take a couple of years for that to settle.

Networks

Trustworthy mobile network data is challenging to acquire. Geographic differences create huge effects that we can see as variability in various global indexes. This variance forces us towards the bottom of the range when estimating our baseline, as mobile networks are highly contextual.

Triangulating from both speedtest.net and OpenSignal data (which has declined markedly in usefulness), we're also going to maintain our global network baseline from last year:

- 9Mbps bandwidth

- 170ms RTT

This is a higher bandwidth estimate than might be reasonable, but also a higher RTT to cover the effects of high network behaviour variance. I'm cautiously optimistic that we'll be able to bump one or both of these numbers in a positive direction next year. But they stay put for now.

Developing Your Own Targets

You don't have to take my word for it. If your product behavior or your own team's data or market research suggests different tradeoffs, then it's only right to set your own per-product baseline.

For example, let's say you send more HTML and less JavaScript, or your serving game is on lock and all critical assets load over a single H/2 link. How should your estimates change?

Per usual, I've also updated the rinky-dink live model that you can use to select different combinations of device, network, and content type.
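For a feel of how the network half of such an estimate moves, here is a minimal back-of-the-envelope sketch. It is not the live model mentioned above: it only adds serialised transfer time to a handful of round trips, and the `round_trips=4` default (DNS, TCP, TLS, first request) is an assumption of this sketch rather than a figure from this post. The function and variable names are illustrative.

```python
KIB = 1024  # bytes per KiB


def wire_time_seconds(payload_kib: float, bandwidth_mbps: float,
                      rtt_ms: float, round_trips: int = 4) -> float:
    """Rough network-only estimate for one cold page load.

    round_trips is an assumed count of serialised round trips
    (DNS, TCP, TLS, first request); it is not a figure from the post.
    """
    transfer_s = (payload_kib * KIB * 8) / (bandwidth_mbps * 1_000_000)
    latency_s = round_trips * rtt_ms / 1000
    return transfer_s + latency_s


# Upper end of the 2023 targets: ~150KiB of markup, CSS, images and fonts,
# plus ~350KiB of JavaScript.
payload_kib = 150 + 350

# Mobile baseline from this post: 9Mbps, 170ms RTT.
mobile_s = wire_time_seconds(payload_kib, bandwidth_mbps=9, rtt_ms=170)
# Desktop baseline from this post: throttled Cable profile, 5Mbps, ~25ms RTT.
cable_s = wire_time_seconds(payload_kib, bandwidth_mbps=5, rtt_ms=25)

print(f"mobile baseline: {mobile_s:.2f}s on the wire")
print(f"throttled cable: {cable_s:.2f}s on the wire")
```

Whatever remains of the five-second goal after the wire time has to cover parsing, script execution, and rendering on a slow CPU, none of which this sketch models. It also ignores TCP slow start and contention between connections, so treat the output as a floor rather than a forecast.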

The Performance Inequality Gap is Growing

Essential public services are now delivered primarily through digital channels in many countries. This means what the frontend community celebrates and promotes has a stochastic effect on the provision of those services — which leads to an uncomfortable conversation because, taken as a whole, it isn't working.

Pervasively poor results are part of why responsible public sector organisations are forced to develop HTML-first, progressive enhancement guidance in stark opposition to the "frontend consensus".

This is an indictment: modern frontend's fascination with towering piles of JavaScript complexity is not delivering better experiences for most users.

For a genuinely raw example, consider California, the state where I live. In early November, it was brought to my attention that CA.gov "felt slow", so I gave it a look. It was bad on my local development box, so I put it under the WebPageTest microscope. The results were, to be blunt, a travesty.

How did this happen? Well, per the new usual, overly optimistic assumptions about the state of the world accreted until folks at the margins were excluded.

In the case of CA.gov, it was an official Twitter embed that, for some cursed reason, had been built using React, Next.js, and the full parade of modern horrors. Removing the embed, along with code optimistically built in a pre-JS-bloat era that blocked rendering until all resources were loaded, resulted in a dramatic improvement:

This is not an isolated incident. These sorts of disasters have been arriving on my desk with shocking frequency for years.

Nor is this improvement a success story, but rather a cautionary tale about the assumptions and preferences of those who live inside the privilege bubble. When they are allowed to set the agenda, folks who are less well-off get hurt.

It wasn't the embed engineer getting paid hundreds of thousands of dollars a year to sling JavaScript who was marginalised by this gross misapplication of overly complex technology. No, it was Californians who could least afford fast devices and networks who were excluded. Likewise, it hasn't been those same well-to-do folks who have remediated the resulting disasters. They don't even clean up their own messes.

Frontend's failure to deliver in today's mostly-mobile, mostly-Android world is shocking, if only for the durability of the myths that sustain the indefensible. We can't keep doing this.

As they say, any trend that can't continue won't.

FOOTNOTES

Apologies for the lack of speaker notes in this deck. If there's sufficient demand, I can go back through and add key points. Let me know if that would help you or your team over on Mastodon. ⇐

Since at least 2017, I've grown increasingly frustrated at the way we collectively think about the tradeoffs in frontend metrics. Per this year's post on a unified theory of web performance, it's entirely possible to model nearly every interaction in terms of a full page load (and vice versa).

What does this tell us? Well, briefly, it tells us that the interaction loop for each interaction is only part of the story. Recall the loop's phases:

- Interactive (ready to handle input)

- Receiving input

- Acknowledging input, beginning work

- Updating status

- Work ends, output displayed

- GOTO 1

Now imagine we collect all the interactions a user performs in a session (ignoring scrolling, which is nearly always handled by the browser unless you screw up), and then we divide the total set of costs incurred by the number of turns through the loop.
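The division described above can be sketched in a few lines. Everything numeric here is hypothetical: the two latency profiles are invented for illustration (a light first load with a full navigation per turn, versus a heavy first load with cheap turns afterwards) and are not measurements from any site. The function name and the choice to count the first load as turn one are also assumptions of the sketch.

```python
def latency_per_turn(first_load_s: float, per_turn_s: float, turns: int) -> float:
    """Total session latency divided by turns through the interaction loop.

    The first load counts as turn one; every later turn costs per_turn_s.
    """
    if turns < 1:
        raise ValueError("a session has at least one turn")
    total_s = first_load_s + per_turn_s * (turns - 1)
    return total_s / turns


# Hypothetical profiles, for illustration only.
LIGHT = {"first_load_s": 2.0, "per_turn_s": 1.0}         # cheap start, full navigations
FRONT_LOADED = {"first_load_s": 6.0, "per_turn_s": 0.2}  # costly start, cheap turns

for turns in (1, 3, 6, 20):
    light = latency_per_turn(turns=turns, **LIGHT)
    heavy = latency_per_turn(turns=turns, **FRONT_LOADED)
    print(f"{turns:>2} turns: light {light:.2f}s/turn, front-loaded {heavy:.2f}s/turn")
```

With these made-up numbers the front-loaded profile only pulls ahead once a session runs past six turns. The sketch says nothing about where real sites land; it only shows why session depth decides whether paying up-front can ever be repaid.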

Since our goal is to ensure users complete each turn through the loop with low latency and low variance, we can see the colourable claim for SPA architectures take shape: by trading off some initial latency, we can reduce total latency and variance. But this also gives rise to the critique: OK, but does it work?

The answer, shockingly, seems to be "no" — at least not as practised by most sites adopting this technology over the past decade.

The web performance community should eventually come to a more session-depth-weighted understanding of metrics and goals. Still, until we pull into that station, per-page-load metrics are useful. They model the better style of app construction and represent the most actionable advice for developers. ⇐

The target that this series has used consistently has been reaching a consistently interactive ("TTI") state in less than 5 seconds on the chosen device and network baseline.

This isn't an ideal target.

First, even with today's P75 network and device, we can aim higher (lower?) and get compelling experiences loaded and main-thread clean in much less than 5 seconds.

Second, this target was set in conversation back in 2016 in preparation for a Google I/O talk, based on what was then possible. At the time, this was still not ambitious enough, but the impact of an additional connection shrunk the set of origins that could accomplish the feat significantly.

Lastly, P75 is not where mature teams and developers spend their effort. Instead, they're looking up the percentiles and focusing on P90+, and so for mature teams looking to really make their experiences sing, I'd happily recommend that you target 5 second TTI at P90 instead. It's possible, and on a good stack with a good team and strong management, a goal you can be proud to hit. ⇐

Looking at the P75 networks and devices may strike mature teams and managers as a sandbagged goal and, honestly, I struggle with this.

On the one hand, yes, we should be looking into the higher percentiles. But even these weaker goals aren't within reach for most teams today. If we moved the ecosystem to a place where it could reliably hit these limits and hold them in place for a few years, the web would stand a significantly higher chance of remaining relevant.

On the other hand, these difficulties stack. Additive error means that targeting the combination P75 network and P75 device likely puts you north of P90 in the experiential distribution, but it's hard to know. ⇐

Data-minded folks will be keenly aware that simply extrapolating from average selling price over time can lead to some very bad conclusions. For example, what if device volumes fluctuate significantly? What if, in more recent years, ASPs fluctuate significantly? Or what if divergence in underlying data makes comparison across years otherwise unreliable?

These are classic questions in data analysis, and thankfully the PC market has been relatively stable in volumes, prices, and segmentation, even through the pandemic.

As covered later in this post, mobile is showing signs of heavy divergence in properties by segment, with the high-end pulling away in both capability and price. This is happening even as global ASPs remain relatively fixed, due to the increases in low-end volume over the past decade. Both desktop and mobile are staying within a narrow Average Selling Price band, but in both markets (though for different reasons), the P75 is not where folks looking only at today's new devices might expect it to be.

In this way, we can think of the Performance Inequality Gap as being an expression of Alberto Cairo's visual data lessons: things may look descriptively similar at the level of movement of averages between desktop and mobile, but the underlying data tells a very different story. ⇐

Apple Is Not Defending Browser Engine Choice

Gentle reader, I made a terrible mistake. Yes, that's right: I read the comments on a MacRumors article. At my age, one knows better. And yet.

As penance for this error, and for being short with Miguel, I must deconstruct the ways Apple has undermined browser engine diversity. Contrary to claims of Apple partisans, iOS engine restrictions are not preventing a "takeover" by Chromium — at least that's not the primary effect. Apple uses its power over browsers to strip-mine and sabotage the web, hurting all engine projects and draining the web of future potential.

As we will see, both the present and future of browser engine choice are squarely within Cupertino's control.

Apple's Long-Standing Policies Are Anti-Diversity

A refresher on Apple's iOS browser policies:

- From iOS 2.0 in '08 to iOS 14 in late '20, Apple would not allow any browser but Safari to be the default.

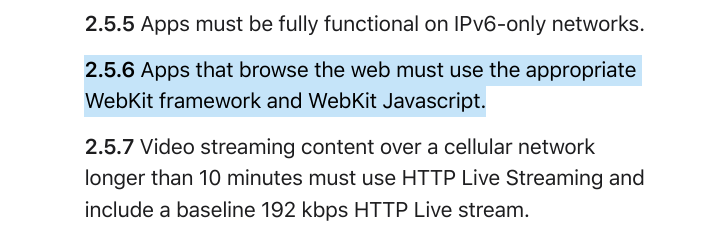

- For 14 years and counting, Apple has prevented competing browsers from bringing their own engines, forcing vendors to build skins over Apple's WebKit binary, which has historically been slower, less secure, and lacking in features.

- Apple will not even allow competing browsers to provide different runtime flags to WebKit. Instead, Fruit Co. publishes a paltry set of options that carry an unmistakable odour of first-party app requirements.

- Apple continues to self-preference through exclusive API access for Safari; e.g., the ability to install PWAs to the home screen, implement media codecs, and much else.

Defenders of Apple's monopoly offer hard-to-test claims, but many boil down to the idea that Apple's product is inferior by necessity. This line is frankly insulting to the good people that work on WebKit. They're excellent engineers; some of the best, pound for pound, but there aren't enough of them. And that's a choice.

"WebKit couldn't compete if it had to."

Nobody frames it precisely this way; instead they'll say "if WebKit weren't mandated, Chromium would take over," or "Google would dominate the web if not for the WebKit restriction."

That potential future requires mechanisms of action — something to cause Safari users to switch. What are those mechanisms? And why are some commenters so sure the end is nigh for WebKit?

Recall the status quo: websites can already ask iOS users to download alternative browsers. Thanks to (belated) questioning by Congress, they can even be set as the user's default, ensuring a higher probability to generate search traffic and derive associated revenue. None of that hinges on browser engine choice; it's just marketing. At the level of commerce, Apple's capitulation on default browser choice is a big deal, but it falls short of true differentiation.

So, websites can already put up banners asking users to get different browsers. If WebKit is doomed, its failure must lie in other stars; e.g., that Safari's WebKit is inferior to Gecko and Blink.

But the quality and completeness of WebKit is entirely within Apple's control.

Past swings away from OS default browsers happened because alternatives offered new features, better performance, improved security, and good site compatibility. These are properties intrinsic to the engine, not just the badge on the bonnet. Marketing and distribution have a role, but in recent browser battles, better engines have powered market shifts.

To truly differentiate and win, competitors must be able to bring their own engines. The leads of OS incumbents are not insurmountable because browsers are commodities with relatively low switching costs. Better products tend to win, if allowed, and Apple knows it.

Apple's prohibition on iOS browser engine competition has drained the potential of browser choice to deliver improvements. Without the ability to differentiate on features, security, performance, privacy, and compatibility, what's to sell? A slightly different UI? That's meaningful, but identically feeble web features cap the potential of every iOS browser. Nobody can pull ahead, and no product can offer future-looking capabilities that might make the web a more attractive platform.

This is working as intended:

On OSes with proper browser competition, sites can recommend browsers with engines that cost less to support or unlock crucial capabilities. Major sites asking users to switch can be incredibly effective in aggregate. Standards support is sometimes offered as a solution, but it's best to think of it as a trailing indicator.1 Critical capabilities often arrive in just one engine to start with, and developers that need these features may have incentive to prompt users to switch.

Developers are reluctant to do this, however; turning away users isn't a winning growth strategy, and prompting visitors to switch is passé.

Still, in extremis, missing features and the parade of showstopping bugs render some services impossible to deliver. In these cases, suggesting an alternative beats losing users entirely.

But what if there's no better alternative? This is the situation that Apple has engineered on iOS. Cui bono? — who benefits?

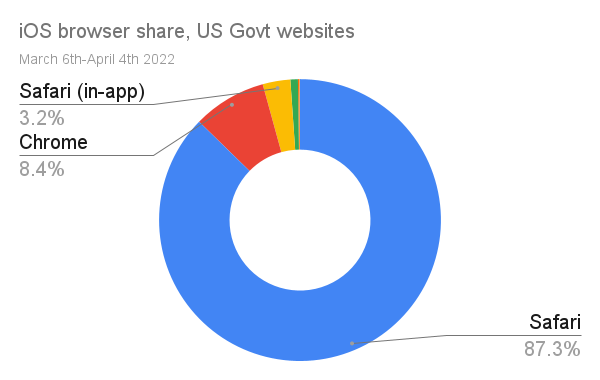

All iOS browsers present as Safari to developers. There's no point in recommending a better browser because none is available. The combined mass of all iOS browsing pegged to the trailing edge means that folks must support WebKit or decamp for Apple's App Store, where it hands out capabilities like candy, but at a shocking price.

iOS's mandated inadequacy has convinced some that when engine choice is possible, users will stampede away from Safari. This would, in turn, cause developers to skimp on testing for Apple's engine, making it inevitable that browsers based on WebKit and other minority engines could not compete. Or so the theory goes.

But is it predestined?

Perhaps some users will switch, but browser market changes take a great deal of time, and Apple enjoys numerous defences.

To the extent that Apple wants to win developers and avoid losing users, it has plenty of time.

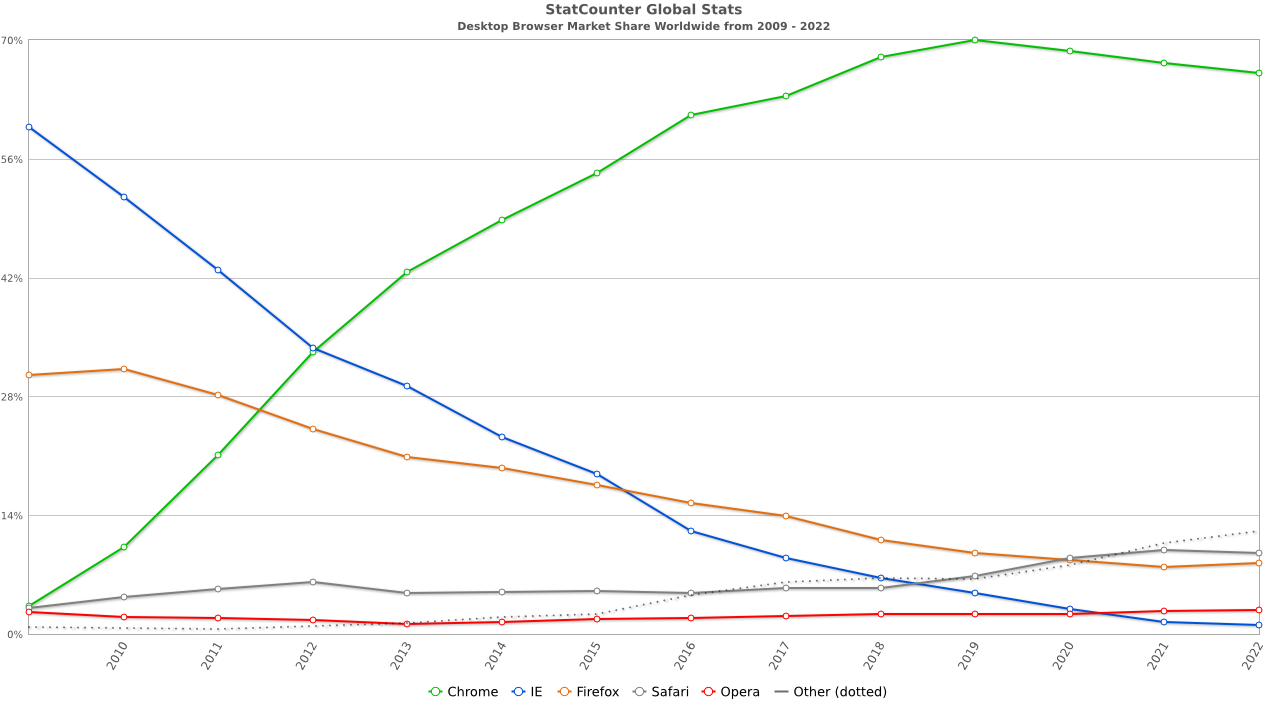

It took over five years for Chrome to earn majority share on Windows with a superior product, and there's no reason to think iOS browser share will move faster. Then there's the countervailing evidence from macOS, where Safari manages to do just fine.

Regulatory mandates about engine choice will also take more than a year to come into force, giving Apple plenty of time to respond and improve the competitiveness of its engine. And that's the lower bound.

Apple's pattern of malicious compliance will likely postpone true choice even further. As Apple fights tooth-and-nail to prevent alternative browser engines, it will try to create ambiguity about vendors' ability to ship their best products worldwide, potentially delaying high-cost investment in ports with uncertain market reach.

Cupertino may also try to create arduous processes that force vendors to individually challenge the lack of each API, one geography at a time. In the best case, time will still be lost to this sort of brinksmanship. This is time that Apple can use to improve WebKit and Safari to be properly competitive.

Why would developers recommend alternatives if Safari adds features, improves security, prioritises performance, and fumigates for showstopping bugs? Remember: developers don't want to prompt users to switch; they only do it under duress. The features and quality of Safari are squarely in Apple's control.

So, given that Apple has plenty of time to catch up, is it a rational business decision to invest enough to compete?

Browsers Are Big Business

Browsers are both big business and industrial-scale engineering projects. Hundreds of folks are needed to implement and maintain a competitive browser with specialisations in nearly every area of computing. World-class experts in graphics, networking, cryptography, databases, language design, VM implementation, security, usability (particularly usable security), power management, compilers, fonts, high-performance layout, codecs, real-time media, audio and video pipelines, and per-OS specialisation are required. And then you need infrastructure; lots of it.

How much does all of this cost? A reasonable floor comes from Mozilla's annual reports. The latest consolidated financials (PDF) are from 2020 and show that, without marketing expenses, Mozilla spends between $380 and $430 million US per year on software development. Salaries are the largest category of these costs (~$180-210 million), and Mozilla economises by hiring remote employees paid local market rates, without large bonuses or stock-based compensation.

From this data, we can assume a baseline cost to build and maintain a competitive, cross-platform browser at $450 million per year.

Browser vendors fund their industrial-scale software engineering projects through integrations. Search engines pay browser makers for default placement within their products. They, in turn, make a lot of money because browsers send them transactional and commercial intent searches as part of the query stream.

Advertisers bid huge sums to place ads against keywords in these categories. This market, in turn, funds all the R&D and operational costs of search engines, including "traffic acquisition costs" like browser search default deals.2

How much money are we talking about? Mozilla's $450 million in annual revenue comes from approximately 8% of the desktop market and negligible mobile share. Browsers are big, big business.

WebKit Is No Charity

Despite being largely open source, browsers and their engines are not loss leaders.

Safari, in particular, is wildly profitable. The New York Times reported in late 2020 that Google now pays Apple between $8 and $12 billion per year to remain Safari's default search engine, up from $1 billion in 2014. Other estimates put the current payments in the $15 billion range. What does this almighty torrent of cash buy Google? Searches, preferably of the commercial intent sort.

Mobile accounts for two-thirds of web traffic (or thereabouts), making outsized iOS adoption among wealthy users particularly salient to publishers and advertisers. Google's payments to Apple are largely driven by the iPhone rather than its niche desktop products where effective browser competition has reduced the influence of Apple's defaults.

Even with Apple's somewhat higher salaries per engineer, the skeleton staffing of WebKit, combined with the easier task of supporting fewer platforms, suggests that Apple is unlikely to spend considerably more than Mozilla does on browser development. In 2014, Apple would have enjoyed a profit margin of 50% if it had spent half a billion on browser engineering. Today, that margin would be 94-97%, depending on which figure you believe for Google's payments.

In absolute terms, that's more profit than Apple makes selling Macs.

Compare Cupertino's 3-6% search revenue reinvestment in the web with Mozilla's near 100% commitment, then recall that Mozilla has consistently delivered a superior engine to more platforms. I don't know what's more embarrassing: that some folks argue with a straight face that Apple is trying hard to build a good browser, or that it is consistently overmatched in performance, security, and compatibility by a plucky non-profit foundation that makes just ~5% of Apple's web revenue.
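The margin and reinvestment figures in this section are simple ratios, and a short sketch makes them easy to re-run with different inputs. The half-billion-dollar engineering budget is the article's own assumption about Apple's spending, not a reported number, and the function names are illustrative only.

```python
# Back-of-the-envelope check of the margin and reinvestment figures.
# Assumption (from the article's argument, not a reported figure):
# Apple spends roughly $0.5 billion per year on browser engineering.
ENGINEERING_COST_BN = 0.5  # assumed annual browser spend, in $ billions


def margin(payment_bn: float, cost_bn: float = ENGINEERING_COST_BN) -> float:
    """Share of search-default revenue left over after engineering costs."""
    return (payment_bn - cost_bn) / payment_bn


def reinvestment(payment_bn: float, cost_bn: float = ENGINEERING_COST_BN) -> float:
    """Share of search-default revenue spent on the browser (1 - margin)."""
    return cost_bn / payment_bn


# Payment figures quoted in the text: $1bn (2014), then $8bn to $15bn.
scenarios = [("2014", 1.0), ("low estimate", 8.0), ("high estimate", 15.0)]

for label, payment in scenarios:
    print(
        f"{label}: margin {margin(payment):.1%}, "
        f"reinvested {reinvestment(payment):.1%}"
    )
```

Raising the assumed engineering budget moves the results less than one might expect: even at double the spend, the margin on a $15 billion payment stays above 90%.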

Choices, Choices

Steve Jobs launched Safari for Windows in the same WWDC keynote that unveiled the iPhone.

Commenters often fixate on the iPhone's original web-based pitch, but don't give Apple stick for reducing engine diversity by abandoning Windows three versions later.

Today, Apple doesn't compete outside its home turf, and when it has agency, it prevents others from doing so. These are not the actions of a firm that is consciously attempting to promote engine diversity. If Apple is an ally in that cause, it is only by accident.

Theories that postulate a takeover by Chromium dismiss Apple's power over a situation it created and recommits to annually through its budgeting process.

This is not a question of resources. Recall that Apple spends $85 billion per year on stock buybacks3, $15 billion on dividends, enjoys free cash flow larger than the annual budgets of 47 nations, and retains tens of billions of dollars of cash on hand.4 And that's to say nothing of Apple's $100+ billion in non-business-related long-term investments.

Even if Safari were a loss leader, Apple would be able to avoid producing a slower, stifled, less secure, famously buggy engine without breaking the bank.

Apple needs fewer staff to deliver equivalent features because Safari supports fewer OSes. The necessary investments are also R&D expenses that receive heavy tax advantages. Apple enjoys enviable discounts to produce a credible browser, but refuses to do so.

Unlike Microsoft's late and underpowered efforts with IE 7-11, Safari enjoys tolerable web compatibility, more than 90% share on a popular OS, and an unheard-of war chest with which to finance a defence. The postulated apocalypse seems far away and entirely within Apple's power to forestall.

Recent Developments

One way to understand the voluntary nature of Safari's poor competitiveness is to put Cupertino's recent burst of effort in context.

When regulators and legislators began asking questions in 2019, a response was required. Following Congress' query about default browser choice, Apple quietly allowed it through iOS 14 (however ham-fistedly) the following year. This underscores Apple's gatekeeper status and the tiny scale of investment required to enable large changes.

In the past six months, the Safari team has gone on a veritable hiring spree. This month's WWDC announcements showcased returns on that investment. By spending more in response to regulatory pressure, Apple has eviscerated notions that it could not have delivered a safer, more capable, and competitive browser many years earlier.

Safari's incremental headcount allocation has been large compared to the previous size of the Safari team, but in terms of Apple's P&L, it's loose change. Predictably, hiring talent to catch up has come at no appreciable loss to profitability.

The competitive potential of any browser hinges on headcount, and Apple is not limited in its ability to hire engineering talent. Recent efforts demonstrate that Apple has been able to build a better browser all along and, year after year, chose not to.

How Apple Gutted Mozilla's Chances

For over a dozen years, setting any browser other than Safari as the iOS default was impossible. This spotted Safari a massive market share head-start. Meanwhile, restrictions on engine choice continue to hamstring competitors, removing arguments for why users should switch. But don't take my word for it; here's the recent "UK CMA Final Report on Mobile Ecosystems" summarising submissions by Mozilla and others (pages 154-155):

5.48 The WebKit restriction also means that browser vendors that want to use Blink or Gecko on other operating systems have to build their browser on two different browser engines. Several browser vendors submitted that needing to code their browser for both WebKit and the browser engine they use on Android results in higher costs and features being deployed more slowly.

5.49 Two browser vendors submitted that they do not offer a mobile browser for iOS due to the lack of differentiation and the extra costs, while Mozilla told us that the WebKit restriction delayed its entrance into iOS by around seven years.

That's seven years of marketing, feature iteration, and brand loyalty that Mozilla sacrificed on the principle that if they could not bring their core differentiator, there was no point.

It would have been better if Mozilla had made a ruckus, rather than hoping the world would notice its stoic virtue, but thankfully the T-rex has roused from its slumber.

Given the hard times the Mozilla Foundation has found itself in, it seems worth trying to quantify the costs.

To start, Mozilla must fund a separate team to re-develop features atop a less-capable runtime. Every feature that interacts with web content must be rebuilt in an ad-hoc way using inferior tools. Everything from form autofill to password management to content blocking requires extra resources to build for iOS. Not only does this tax development of the iOS product, it makes coordinated feature launches more costly across all ports.

Most substantially, iOS policies against default browser choice — combined with "in-app-browser" and search entry point shenanigans — have delayed and devalued browser choice.

Until late 2020, users needed to explicitly tap the Firefox icon on the home screen to get back to their browser. Naïvely tapping links would, instead, load content in Safari. This split experience causes a sort of pervasive forgetfulness, making the web less useful.

Continuous partial amnesia about browser-managed information is bad for users, but it hurts browser makers too. On OSes with functional competition, convincing a user to download a new browser has a chance of converting nearly all of their browsing to that product. iOS (along with Android and Facebook's mobile apps) undermines this by constantly splitting browsing, ignoring the user's default. When users don't end up in their browser, searches occur through it less often, affecting revenue. Web developers also experience this as a reduction in visible share of browsing from competing products, reducing incentives to support alternative engines.

A forgetful web also hurts publishers. Ad bid rates are suppressed, and users struggle to access pay-walled content when browsing is split. The conspicuous lack of re-engagement features like Push Notifications is the rotten cherry on top, forcing sites to push users to the App Store, where Apple doesn't randomly log users out or deprive publishers of key features.

Users, browser makers, web developers, and web businesses all lose. The hat-trick of value destruction.

Back Of The Napkin

The pantomime of browser choice on iOS has created an anaemic, amnesiac web. Tapping links is more slogging than surfing when autofill fails, passwords are lost, and login state is forgotten. Browsers become less valuable as the web stops being a reliable way to complete tasks.

Can we quantify these losses?

Estimating lost business from user frustration and ad rate depression is challenging. But we can extrapolate what a dozen years of choice might have meant for Mozilla from what we know about how Apple monetises the web.

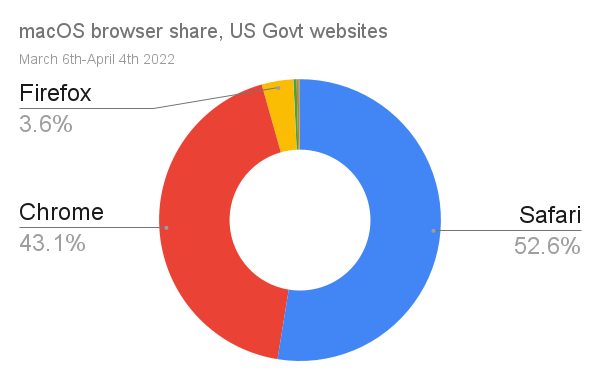

For the purposes of argument, let's assume Mozilla would be paid for web traffic at the same rate as Apple: $8-15 billion per year for ~75% share of traffic from Apple OSes.

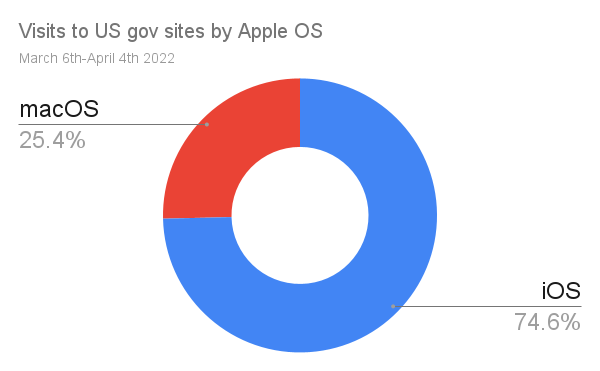

If the traffic numbers to US government websites are reasonable proxies for the iOS/macOS traffic mix (big "if"s), then equal share for Firefox on iOS to macOS would be worth $215-400 million per year.5 Put differently, there's reason to think that Mozilla would not have suffered layoffs if Apple were an ally of engine choice.
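The shape of this estimate can be sketched as a pro-rata calculation: if a browser is paid per unit of traffic at Apple's rate, its revenue scales with its share relative to Safari's. The ~75% Safari share and the $8-15 billion range come from the text; the 2% Firefox share used below is a hypothetical placeholder, not a figure from the article, chosen only because it lands near the quoted range.

```python
# Pro-rata sketch of the "same rate as Apple" estimate.
# From the text: $8-15bn per year buys ~75% of traffic from Apple OSes.
# Hypothetical (NOT from the article): Firefox holding 2% of that traffic.
SAFARI_SHARE = 0.75
HYPOTHETICAL_FIREFOX_SHARE = 0.02


def pro_rata_revenue_musd(
    payment_bn: float,
    browser_share: float = HYPOTHETICAL_FIREFOX_SHARE,
    safari_share: float = SAFARI_SHARE,
) -> float:
    """Revenue in $ millions if paid per share point at Apple's rate."""
    payment_musd = payment_bn * 1000
    return payment_musd * browser_share / safari_share


low = pro_rata_revenue_musd(8.0)
high = pro_rata_revenue_musd(15.0)
print(f"${low:.0f}-{high:.0f} million per year")
```

The result is linear in the assumed share, so halving or doubling the placeholder halves or doubles the estimate; the real inputs are the traffic figures the article draws from US government website data.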

Apple's policies have made the web a less compelling ecosystem, its anti-competitive behaviours drive up competitors' costs, and it simultaneously starves them of revenue by undermining browser choice.

If Apple is a friend of engine diversity, who needs enemies?

The Best Kind Of Correct

There is a narrow, fetid sense in which Apple's influence is nominally pro-diversity. Having anchored a significant fraction of web traffic at the trailing edge, businesses that do not decamp for the App Store may feel obliged to support WebKit.

This is a malignant form of diversity, not unlike other lagging engines through the years that harmed users and web-based businesses by externalising costs. But on OSes with true browser choice, alternatives were meaningful.

Consider the loathed memory of IE 6, a browser that overstayed its welcome by nearly a decade. For as bad as it was, folks could recommend alternatives. Plugins also allowed us to transparently upgrade the platform.

Before the rise of open-source engines, the end of one browser lineage may have been a deep loss to ecosystem diversity, but in the past 15 years, the primary way new engines emerge has been through forking and remixing.

But the fact of an engine being different does not make that difference valuable, and WebKit's differences are incremental. Sure, Blink now has a faster layout engine, better security, more features, and fewer bugs, but like WebKit, it is also derived from KHTML. Both engines are forks and owe many present-day traits to their ancestors.

Today's KHTML descendants are not the end of the story. Future forks are possible. New codebases can be built from parts. Indeed, there's already valuable cross-pollination in code between Gecko, WebKit, and Chromium. Unlike the '90s and early 2000s, diversity can arrive in valuable increments through forking and recombination.

What's necessary for leading edge diversity, however, is funding.

By simultaneously taking a massive pot of cash for browser-building off the table, returning the least it can to engine development, and preventing others from filling the gap, Apple has foundationally imperilled the web ecosystem by destroying the utility of a diverse population of browsers and engines.

Apple has agency. It is not a victim, and it is not defending engine diversity.

What Now?

A better, brighter future for the web is possible, and thanks to belated movement by regulators, increasingly likely. The good folks over at Open Web Advocacy are leading the way, clearly explaining to anyone who will listen both what's at stake and what it will take to improve the situation.

Investigations are now underway worldwide, so if you think Apple shouldn't be afraid of a bit of competition if it will help the web thrive, consider getting involved. And if you're in the UK or do business there, consider helping the CMA help the web before July 22nd, 2022. The future isn't written yet, and we can change it for the better.

FOOTNOTES

Many commenters come to debates about compatibility and standards compliance with a mistaken view of how standards are made. As a result, they perceive vendors with better standards conformance (rather than content compatibility) to occupy a sort of moral high ground. They do not. Instead, it usually represents a broken standards-setting process.

This can happen for several reasons. Sometimes standards bodies shutter, and the state of the art moves forward without them. This presents some risk for vendors that forge ahead without the cover of an SDO's protective IP umbrella, but that risk is often temporary and measured. SDOs aren't hard to come by; if new features are valuable, they can be standardised in a new venue. Alternatively, vendors can renovate the old one if others are interested in the work.

More often, working groups move at the speed of their most obstinate participants, uncomfortably prolonging technical debates already settled in the market and preventing definitive documentation of the winning design. In other cases, a vendor may play games with intellectual property claims to delay standardisation or lure competitors into a patent minefield (as Apple did with Touch Events).