Reading List

The most recent articles from a list of feeds I subscribe to.

Neither artificial, nor intelligent

Large Language Models (LLMs) and tools based on them (like ChatGPT) are all some in tech talk about today. I struggle with the optimism I see from businesses, the haphazard deployment of these systems and the seemingly ever-expanding boundaries of what we are prepared to call “artificially intelligent”. I mean, they bring interesting capabilities, but arguably they are neither artificial, nor intelligent.

Artificial intelligence, as a field, isn't easily defined. There are many different things that fall under the umbrella. It attracts people with a wide range of interests. And it has all sorts of applications, from physical robots to neural networks and natural language processing. Today, there is a lot of hype around Language Models (LMs), a specific technique in the field of “artificial intelligence”, which Emily Bender and colleagues define as ‘systems trained on string prediction tasks’ in their paper ‘On the dangers of stochastic parrots: can language models be too big?’ (one of the co-authors was Timnit Gebru, who had to leave her AI ethics position at Google over it). Hype isn't new in tech, and many recognise the patterns in vague and overly optimistic thought leadership (‘$thing is a bit like when the printing press was invented’, ‘if you don't pivot your business to $thing ASAP, you'll miss out’). Beyond the hype, it's essential to calm down and understand two things: do LLMs actually constitute AI, and what sort of downsides could they pose to people?

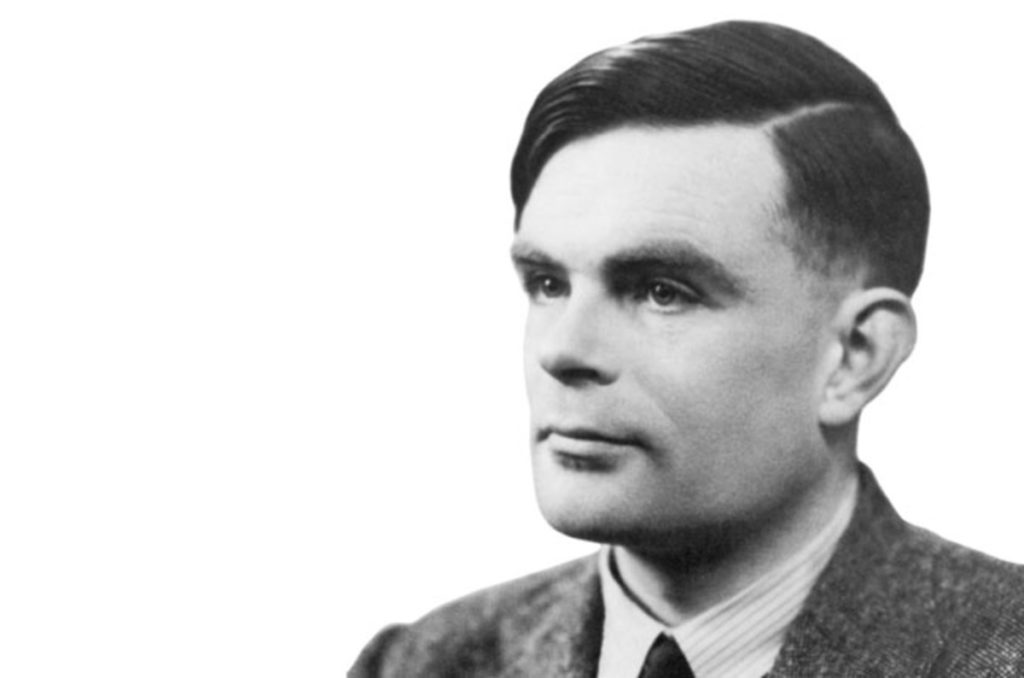

Artificial intelligence, in one of its earliest definitions, is the study of things that are in language indistinguishable from humans. In 1950, Alan Turing famously proposed an imitation game as a test for this indistinguishability. More generally, AIs are systems that think or act like humans, or that think or act rationally. According to many, including OpenAI, the company behind ChatGPT and Whisper, large language models are AI. But that's a company: a non-profit with a for-profit subsidiary—of course they would say that.

Not artificial

In one sense, “artificial” in “AI” means non-human. And yes, of course, LLMs are non-human. But they aren't artificial in the sense that their knowledge has clear, non-artificial origins: the input data that they are trained with.

OpenAI has not openly disclosed where they get their data since GPT-3 (how Orwellian). But it is clear that they gather data from all over the public web, places like Reddit and Wikipedia. Earlier they used filtered data from Common Crawl.

First, there are the long-term consequences for quality. If this tooling results in more large-scale flooding of the web with AI-generated content, and it uses the contents of that same web to continue training the models, it could result in a “misinformation shitshow”. It also seems like a source that can dry up once people stop asking questions on the web and interact directly with ChatGPT instead.

Second, it seems questionable to build off the fruits of other people's work. I don't mean off your employees, that's just capitalism—I mean other people that you scrape input data from without their permission. It was controversial when search engines took the work from journalists, this is that on steroids.

Not intelligent

What about intelligence? Does it make sense to call LLMs and the tools based on them intelligent?

Alan Turing

Alan Turing suggested (again, in 1950) that machines can be said to think if they manage to trick humans such that ‘an average interrogator will not have more than 70 per cent chance of making the right identification after five minutes of questioning’. So maybe he would have regarded ChatGPT as intelligent? I guess someone familiar with ChatGPT's weaknesses could easily ask the right questions and identify it as non-human within minutes. But maybe it's good enough already to fool average interrogators? And a web flooded with LLM-generated content would probably fool (and annoy) us all.

Still, I don't think we can call bots that use LLMs intelligent, because they lack intentions, values and a sense of the world. The sentences systems like ChatGPT generate today merely do a very good job at pretending.

The Stochastic Parrots paper (SP) explains why pretending works:

our perception of natural language text, regardless of how it was generated, is mediated by our own linguistic competence

(SP, 616)

We merely interpret LLMs as coherent, meaningful and intentional, but it's really an illusion:

an LM is a system for haphazardly stitching together sequences of linguistic forms it has observed in its vast training data, according to probabilistic information about how they combine, but without any reference to meaning: a stochastic parrot.

(SP, 617)

They can make it seem like you are in a discussion with an intelligent person, but let's be real, you aren't. The system's replies to your chat prompt aren't the result of understanding or learning (even if the technical term for the process of training these models is ‘deep learning’).

But GPT-4 can pass standardised exams! Isn't that intelligent? Arvind Narayanan and Sayash Kapoor explain that the challenge of standardised exams happens to be one that large language models are good at by nature:

professional exams, especially the bar exam, notoriously overemphasize subject-matter knowledge and underemphasize real-world skills, which are far harder to measure in a standardized, computer-administered way

Still great innovation?

I am not too sure. I don't want to spoil any party or take away useful tools from people, but I am pretty worried about large scale commercial adoption of LLMs for content creation. It's not just that people can now more easily flood the web with content they don't care about just to increase their own search engine positions. Or that the biases in the real world can now propagate and replicate faster with less scrutiny (see SP 613, which shows how this works and suggests more investment in curating and documenting training data). Or that criminals use LLMs to commit crimes. Or that people may use it for medical advice and the advice is incorrect. In Taxonomy of Risks Posed by Language Models, 21 risks are identified. It's lots of things like that, where the balance is off between what's useful, meaningful, sensible and ethical for all on the one hand, and what can generate money for the few on the other. Yes, both sides of that balance can exist at the same time, but money often impacts decisions.

And that, lastly, can lead to increased inequity. Monetarily, e.g. what if your doctor's clinic offers consults with an AI by default, but you can press 1 to pay €50 to speak to a human (as Felienne Hermans warned Volkskrant readers last week)? And also in terms of the effect of computing on climate change: most large language models benefit those who have the most, while their effect (on climate change) threatens marginalised communities (see SP 612).

Wrapping up

I am generally very excited about applications of AI at large, like in cancer diagnosis, machine translation, maps and certain areas of accessibility. And even about the capabilities that LLMs and tools based on them bring. This is a field that can genuinely make the world better in many ways. But it's super important to look beyond the hype and into the pitfalls. As a lot of my feeds are naturally full of optimistic technologists, I have consciously added many more critical journalists, scientists and thinkers to my social feeds. If this piques your interest, one place to start could be the Distributed AI Research Institute (DAIR) on Mastodon. I also recommend the Stochastic Parrots paper (and/or the NYMag feature on Emily Bender's work). If you have any recommended reading or watching, please do toot or email.

Originally posted as Neither artificial, nor intelligent on Hidde's blog.

Funnel 101: sharing your local developer preview with the world

🚀 Do you want to share your web server with the world without exposing your computer to the world? 🚀

If you’re like me, you love using Tailscale to create a secure and private network for your devices. But sometimes, you need to let the outside world access your web server, whether it’s for testing, hosting, or collaborating.

That’s why I’m super excited about Tailscale Funnel, a new feature that lets you route traffic from the internet to your Tailscale node. You can think of it as publicly sharing a node for anyone to access, even if they don’t have Tailscale themselves.

Tailscale Funnel is easy to set up and use. You just need to enable it in the admin console and on your node, and you’ll get a public DNS name for your node that points to Tailscale’s Funnel servers. These servers will proxy the incoming requests over Tailscale to your node, where you can terminate the TLS and serve your content.

The best part is that Tailscale Funnel is secure and private. The Funnel servers don’t see any information about your traffic or what you’re serving. They only see the source IP and port, the SNI name, and the number of bytes passing through. And they can’t connect to your nodes directly. They only offer a TCP connection, which your nodes can accept or reject.

Tailscale Funnel is currently in beta and available for all users. I’ve been using it for a while now and I’m blown away by how simple and powerful it is. It’s like having your own personal cloud service without any hassle or cost.

GitHub Copilot for the Command Line is amazing!

Hyper key

I started using a hyper key on macOS. A hyper key is a single key mapped to Shift + Ctrl + Opt + Cmd. Since this isn’t exactly practical to pull off with your fingers, apps don’t use this combination for built-in shortcuts. This means you have a layer for custom shortcuts without worrying about clashes.

My hyper key is mapped to Caps Lock. I actually already use caps lock as an escape key. Less travel than reaching for Esc with your pinky! Thanks to Karabiner Elements and Brett Terpstra’s guide I was able to remap it as Esc and a hyper key.

When I give Caps Lock a short tap, it functions as Esc. If I hold it and press another key, it functions as a hyper key, Shift + Ctrl + Opt + Cmd.
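To make the tap-versus-hold behaviour concrete, here is a minimal sketch in TypeScript. It is not how Karabiner Elements is configured or implemented; the `resolveCapsLock` function and its types are hypothetical names made up for illustration, and it deliberately ignores timing (a real setup would also decide how long a "short tap" may last).

```typescript
// Hypothetical model of the remapping described above; not Karabiner Elements' API.
type Modifier = "shift" | "ctrl" | "opt" | "cmd";

interface KeyOutput {
  key: string;
  modifiers: Modifier[];
}

// The four modifiers that together make up the hyper key.
const HYPER: Modifier[] = ["shift", "ctrl", "opt", "cmd"];

// Takes the keys that were pressed while Caps Lock was held down
// and returns what the system should receive instead.
function resolveCapsLock(keysPressedWhileHeld: string[]): KeyOutput[] {
  // Caps Lock pressed and released on its own: behave like Esc.
  if (keysPressedWhileHeld.length === 0) {
    return [{ key: "escape", modifiers: [] }];
  }
  // Caps Lock held while other keys were pressed: send each key with all four modifiers.
  return keysPressedWhileHeld.map((key) => ({ key, modifiers: [...HYPER] }));
}
```

In this sketch, `resolveCapsLock([])` yields a plain Esc, while `resolveCapsLock(["t"])` yields `t` with Shift + Ctrl + Opt + Cmd, which is the combination you would then bind to something like a global application shortcut.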

I still have a lot of room to map to, but for now I’m using my hyper key for a few global application shortcuts like Quick Entry in Things. In the future, I’m planning to map more Raycast commands too.

Protos

This is a work of fiction. Names, characters, business, events and incidents are the products of the author’s imagination. Any resemblance to actual persons, living or dead, or actual events is purely coincidental.

One day, Jeff stretched at his desk while he was puzzling out the problem his product manager had thrust upon him. It was an emergency, as usual. The login form had the wrong color at the wrong place, and it was causing people to look at the login form then run away in terror.

Or something like that, they just wanted the position of the login button changed so it was under the password box instead of next to it. That should be easy, right?

No. That login form was created by Palima, the person that Jeff had signed off on hiring in the last episode. Ae was an absolute force of nature that had single-handedly written half of the missing code in the monolith, and wrote code that was an absolute work of art, but was absolutely impenetrable to anyone trying to modify it. As always, Palima was busy doing god-knows-what and couldn't help with this task that ae felt was beneath aer.

Hiring more people to help with this? Impossible. Headcount was hard to come by due to the recent fad of pointless layoffs. Even E100, the former bastion of refusing to lay anyone off, finally succumbed to the investor class pressure to "cut costs". Techaro management had followed suit. So he was left with this problem.

While Jeff was puzzling through the dense block of tokens, he took a look at his favorite news aggregator: Hacker Moose. While scrolling through the links, he saw something called "Protos". It claimed to be a tool that he could install in BS Code and then it could rewrite code to his needs.

Jeff was skeptical. This looks too easy, he thought to himself. But, it had a free trial. He hit "install" and then the commands were available. He pointed it at a personal file he used to learn Palima's HypeScript style, then asked it to refactor a function to take an attribute set instead of normal arguments. Kinda like this:

const fooBar = (bar: number, baz: number) => {
  return bar + baz;
};

To something like this:

interface fooBarArgs {
  bar: number;
  baz: number;
}

const fooBar = ({ bar, baz }: fooBarArgs) => {
  return bar + baz;
};

And then it automatically fixed the rest of the code to match that. Protos was the real deal. Jeff stopped in his tracks and really looked at what was going on. He just did something that he'd spent hours doing manually in seconds.

Jeff immediately pointed Protos at the login form issue, described the change to make, and it started auto-completing the solution. All of the things that Jeff had struggled on for months started to fade away and the solution basically wrote itself.

Jeff was flabbergasted. Just in time for his calendar to fire a reminder that his standup meeting was about to start. He walked over to the lunch area and asked the barista to make him his usual: a double shot latte a-le sirop d'érable. With his cup in hand, he walked over to where his team was standing and started small talk.

Palima was present in the office today. Ae had aer keyboard mounted to aer hips and was obviously writing into smart glasses of some kind. Jeff waved to aer and ae looked up and yawned. "'morning."

"Good afternoon Palima, what're you working on today?"

"Fixing the database. There were problems. It's all better now."

Jeff shuddered at the idea of what the "fix" entailed, but time hit and the manager Ariel spoke up: "Good afternoon everyone! What are you working on, and what did you get done? I've got a lot of 1:1 meetings with many wonderful people today, but I'm happy with our progress in the sprint. Palima, you go next."

"There was an issue with corrupt data being written to the database due to an off-by-one error in encoding JSON. I fixed it, and all the data. We don't have to worry, and this fixes the whyOS app without having to wait for an update to be rejected. Jeff, how're you doing?"

Jeff took a moment to process that and cleared his throat. "I figured out what was wrong with the login form, and I have a PR open for review. Today I'm gonna refactor that code so it's less of a nightmare to deal with in the future."

The standup meeting continued, and nothing of note was really brought up. Jeff walked back to his desk and his manager stopped him on the way back.

"Hey, you really got it done? I thought you estimated a whole week for that."

"I figured it out, estimates are just estimates. This code is really complicated."

Ariel seemed to accept that and started to walk back to his desk. "Congrats though, I've got some more things on the backlog if you want to pick up a few more tickets."

Jeff nodded and walked to his desk. The OurWork that Techaro rented was bubbling with activity like it usually did around lunchtime, but Jeff wasn't hungry today. He was curious.

He made it back to his laptop and opened up BS Code again. The Protos extension had installed a button in the lower right hand of the screen. It was pulsing slightly, beckoning his attention.

He opened up one of the tickets Ariel had talked about and found the bit of code. He described the problem and the changes that needed to be made, and Protos's logo spun around a bit, then the changes wrote themselves. This was the real deal.

Jeff suddenly became terrified when he realized the power of this technology. He had to be careful with this. He couldn't tell anyone about this and went over to flag the story on Hacker Moose as spam.

This could put him out of a job. He was shaking at his desk when Palima walked over and clicked happily. Jeff looked over at aer and thought he saw something funny but stopped thinking about it. "What's up?"

"Your code change was perfect. It's approved. Feel free to deploy it when you're ready."

Jeff nodded and thanked Palima, then put on his noise-cancelling headphones and hit the merge button. The login form was deployed, peace was brought to the land and product was finally happy for about 20 minutes.

Protos had claimed its first victim. Jeff was supercharged by Protos. It was almost so easy that it wasn't fun. Jeff worked on a few tickets and decided to keep the branches locally so he could release one or two changes per day. Just enough to look like he was working, not enough that it would look suspicious.

Ariel was suspicious though. He also read Hacker Moose and was skeptical that Jeff could have figured out Palima's code so quickly. He was a bit of a developer himself, so he took a look at one of the backlog tickets and fired up Protos to implement a fix.

It took seconds.

Ariel put it up for code review and Jeff was on alert instantly. He didn't know what to do.

Ariel shrugged and continued over to his meeting with the product team. He wanted to show them this neat tool he had found.

The product team was shocked by this discovery. If the product team could just implement things themselves, they wouldn't need any developers at all! Product started using Protos and was able to submit PRs for code review. Jeff was mortified when he saw this get brought up in a meeting.

Eventually, the product team managed to replace everyone but Palima and Jeff on the developer team with Protos. Features kept coming faster and faster, and they were left to pilot a ship that was growing more and more complicated without any way to stop it.

Then Techaro acquired Protos and made it a proprietary internal tool.

Techaro was unstoppable, sending people to Mars, finally solving the secret to self-driving cars, and eventually curing cancer. All without paying more than 150 developers world-wide to review the mad hallucinations of a machine. They were taking over the world, disrupting the government industry, and then

Jeff woke up at his desk. He must have dozed off. The calendar reminder popped up on his screen, reminding him of his standup. The login form wasn't fixed yet. Hacker Moose didn't have a product named Protos on the frontpage. The domain he remembered from his dream didn't resolve.

Jeff sleepily walked over to his standup and grabbed a coffee. The standup was uneventful but at the end Palima spoke up. Ae said "By the way, has anyone tried using ChatGPT yet? It's pretty cool, and it can write code for you. You just have to describe what you want."

Jeff screamed.