Reading List

The most recent articles from a list of feeds I subscribe to.

Patterns

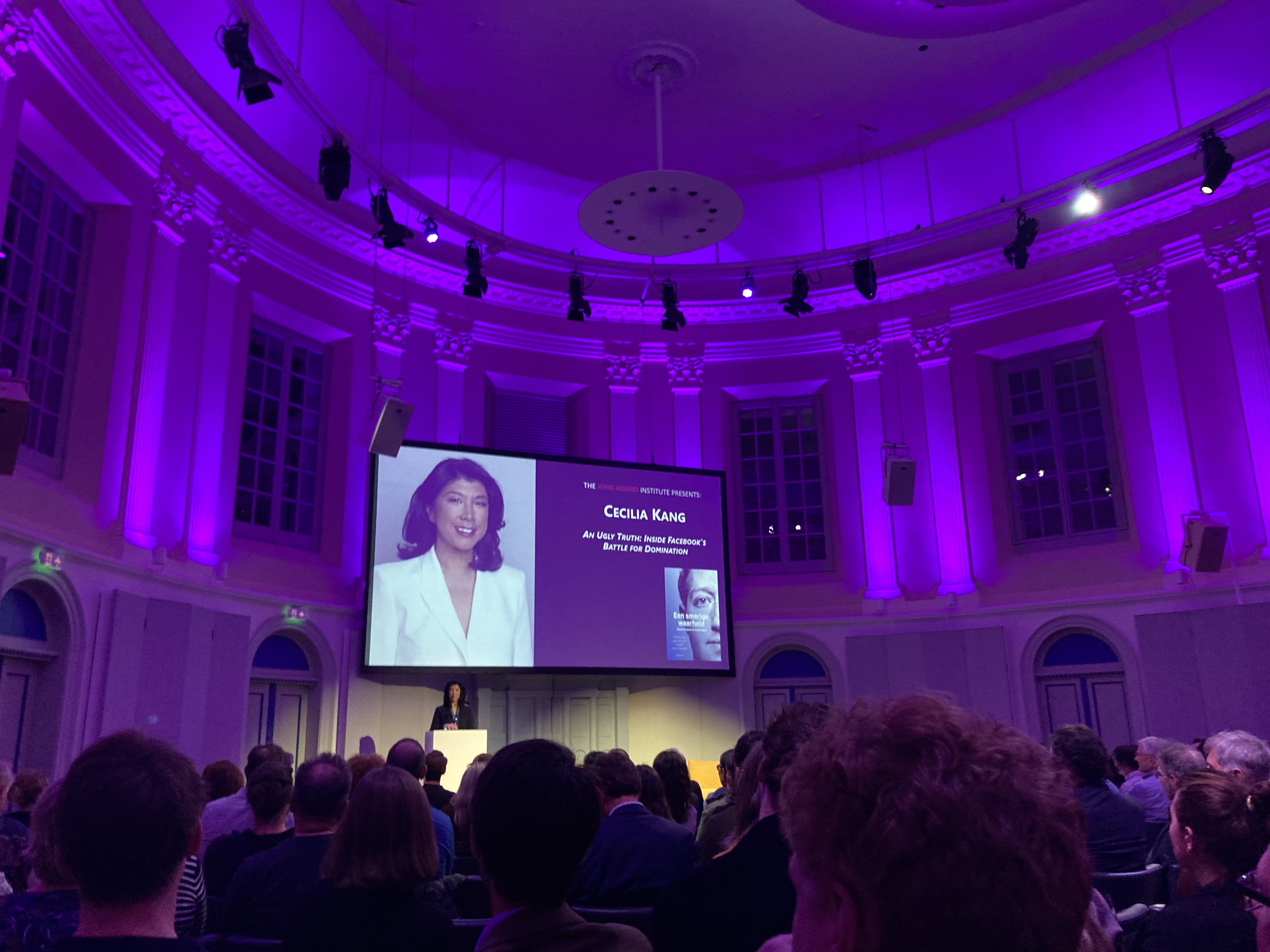

Last week, I attended a conversation with Cecilia Kang, the New York Times journalist who co-authored An Ugly Truth with Sheera Frenkel. Much of it was about patterns in Facebook’s decisions, leadership style and press relations.

I was delighted to be back in a conference hall, and it happened to be the one where we hosted Fronteers 2009 and Mobilism 2011, the memories! These events had sponsors like Opera, Internet Explorer 8 and Blackberry. Anyway, I digress, let’s talk about Facebook.

Profit over people

Facebook wants two things, Kang emphasised: connect all people together through technology and grow their business. Sounds reasonable? Maybe, but these goals impact one another in a very specific way. Facebook sells ads. You can get most people to look at ads if the content triggers people, including when it is upsetting, hateful content. Growing business ends up growing hate. The site does bring people together, yes, but it profits most when they are upset together. And, problematically, maximising profit is the end goal, An Ugly Truth shows.

Is bad content a problem? Kind of, but most platforms have some of that and we should probably blame the authors of that content, not Facebook. Is profit a problem? Or maximising it? It isn’t that per se. All companies try to make profit, most try to make a lot. The problem is profit at the expense of people’s wellbeing and safety. This is where the tobacco and oil company comparisons come in. Facebook understands which content angers or harms people. They ran experiments with happier and less depressing newsfeeds. They knew about persecution of Rohingya people in Myanmar, fired up by a politician they gave a voice. They knew about extremists using their platform to organise storming the Capitol. They knew about the medical misinformation that undermined solutions for the global COVID-19 pandemic.

The thing is, extremism, misinformation and hate thrive on amplification, on being widely shared. Facebook’s inaction is so problematic because it facilitates and accelerates that amplification to make more money. There would be extremists, liars and haters in a world without Facebook, but they would thrive a lot less. They would thrive less on regular TV, and they would thrive less on a social media platform with more ethical content priorities. There are other platforms too that are based on amplification, which often have similar problems, but this post is not about them.

The Facebook Papers, a large set of documents released by whistleblower Frances Haugen to a dozen news outlets, clearly confirm the conclusions of An Ugly Truth: Facebook knows what it amplifies and doesn’t care enough to stop. Of course, they contract huge numbers of content moderators and mark COVID-19 information from trusted sources, but leadership decisions consistently put engagement (profit) before safety (moderating harmful content).

The Atlantic’s Adrienne LaFrance:

Again and again, the Facebook Papers show staffers sounding alarms about the dangers posed by the platform—how Facebook amplifies extremism and misinformation, how it incites violence, how it encourages radicalization and political polarization. Again and again, staffers reckon with the ways in which Facebook’s decisions stoke these harms, and they plead with leadership to do more.

And again and again, staffers say, Facebook’s leaders ignore them.

(From: ‘History Will Not Judge Us Kindly’ in The Atlantic)

The Wall Street Journal’s Facebook Files series revolves around the same conclusion in its opening words:

Facebook Inc. knows, in acute detail, that its platforms are riddled with flaws that cause harm, often in ways only the company fully understands. (…) Time and again, the documents show, Facebook’s researchers have identified the platform’s ill effects. Time and again, despite congressional hearings, its own pledges and numerous media exposés, the company didn’t fix them.

(From: ‘The Facebook Files’)

Inaction is a pattern An Ugly Truth confirms. Time after time, when Facebook leadership can choose between what’s good for people and what makes them more profit, they choose profit. These aren’t always at odds, but when they are, profit is consistently prioritised. And with that, the amplification of bad content.

Besides amplification of harmful content for profit, Facebook also engages in large-scale data collection, as Shoshana Zuboff explained in The Age of Surveillance Capitalism. Facebook even tracks users who deactivated their accounts, and it had shady data-sharing deals with device manufacturers.

Journalists have reported on lots and lots of serious problems related to Facebook’s priorities, some of which I linked above. This brings us to another pattern: Facebook’s press teams downplay the problems or outright deny them. They’ll call a story an absurd suggestion, only for it to turn out to be mostly accurate. They will also often point to the competition, which sometimes has similar problems. Or they attack the messenger. When Frances Haugen was testifying, Andy Stone, a Facebook PR person, shamelessly tweeted:

Just pointing out the fact that @FrancesHaugen did not work on child safety or Instagram or research these issues and has no direct knowledge of the topic from her work at Facebook.

Which is why she brought documents, as Jane Chung aptly replied. This time the press team responded childishly; the book also shows that they like to spin stories and deny truths when they see a chance.

The leadership

The book also goes into the people running Facebook and how they see the world. When I think of Mark Zuckerberg’s world view, I think about that time he called people who trusted him with their data “dumb fucks”. I hesitate to reduce a person’s world view to one quote from their past, but reading An Ugly Truth made me feel that his decisions and priorities of today still show he tends to dismiss other people’s safety.

When he addressed Georgetown University, he said he doesn’t want to ‘censor political speech’ and focused on free speech. The ideology seems to be that a president’s freedom to incite violence or spread covid misinformation, and the public’s freedom to ‘judge for themselves’, are more important than people’s safety. Everyone wants freedoms, but the question is whose freedom gets priority.

Mark Zuckerberg, Kang explained in Amsterdam, is inspired by Silicon Valley men like the controversial libertarian Peter Thiel, and not so much by what his own employees tell him. They often don’t bring important things to him, Kang said, as the company has a culture of protecting the leader. People want to be friends with him, not appear too critical. Maybe that’s the case for many CEOs, but it does make one wonder what the company would have looked like under different leadership that took inspiration from different places.

So, what now?

I think everyone gets how Facebook, Instagram, WhatsApp, React and Oculus have their merits. They can and often do bring people together. Make the world a better place. I don’t mean this sarcastically. Ok, I’m not personally so convinced of Oculus, I’m more of a real world person, but I’ll leave that for a later post. These platforms aren’t inherently bad, they bring lots of good to many people. The bad they bring sometimes merely reflects the bad that’s out there in the world. But it’s clear that Facebook’s role is often that of a megaphone, not just a mirror.

Some of the audience questions were along the lines of “could we remove the bad parts of Facebook?” This is a tricky question, because of where most of the problems manifest. They aren’t in one team that messes things up, they are right in the business model that pays everyone’s salary. Removing the bad parts might not leave us with much. There are also countless stories of people trying to “change the company from within”, which has now become a bit of a meme.

More legislative controls are expected, likely in the US and Europe and likely somewhere in the next 5 years or so. Maybe this will get us more of the good parts and less of the bad parts of Facebook.

Until then, this is my personal list of things we can all do now:

- refuse to work at Facebook; it’s like voting, your individual vote doesn’t matter, but millions of individual votes can push the world in a clear direction, in this case one where Facebook has a hard time finding employees

- quit working at Facebook (I know, controversial), for the same reasons

- stop or reduce usage of Facebook/Meta and its products (including, dare I say it, React)

- help non-tech friends and family move away from Facebook

- stop advertising on Facebook (some companies have)

- help small business friends by building them a simple website outside Facebook’s walled garden

- read up on patterns in tech companies, check out books like An Ugly Truth and Super Pumped (on Uber)

Wrapping up

In conclusion, the issue isn’t that Facebook has harmful content or makes profit, it’s that its leadership consistently decides to prioritise their profit over preventing harm. They know they have problems, but decide against fixing them and aren’t honest about this to the press. This is why we all need a lot less Facebook in our lives, and I urge you to join the movement.

Looking back, the time of Opera, Internet Explorer 8 and Blackberry felt more innocent. The monopolies felt less harmful. I, for one, am very curious how the world will look back at today’s Facebook in ten years’ time. Let’s hope their patterns will have changed for good.

The post Patterns was first posted on hiddedevries.nl blog.

Subsets and supersets of WCAG

“Could you give us a checklist for accessibility, please”, is a frequently asked question to accessibility consultants. Checklists, while convenient, reduce WCAG to a subset. To maximise accessibility, we likely need a superset. This post goes into how both subsets and supersets can be helpful in their own ways.

Why WCAG

In this post, I’ll consider WCAG the baseline, as many governments and organisations do. Accessibility standards are by no means perfect, but they are essential. To get their web accessibility right at scale, organisations need a solid definition of what “success” means when building accessibly. Something to measure. Such definitions require a lot of input and perspectives. They necessarily take long. Standards like WCAG are the closest we have to that, and yes, they have gotten a wide range of input and perspectives. In other words, full WCAG reports are a great way for organisations to monitor their accessibility.

We can’t be doing full audits all the time, if only because those are best done by external auditors, outside the project team. On the other end of the spectrum, just performing WCAG audits isn’t enough. To maximise accessibility, our organisation should test with users with disabilities and include best practices beyond WCAG.

Subsets

Using subsets of WCAG, more team members can work on accessibility more often. More team members, because checklists often require less expertise, and more often, because doing a few checklists requires no planning, unlike conducting a full WCAG audit.

Why settle for less?

Accessibility standards can be daunting to use. If we commit to WCAG 2.1 Level A + AA conformance, there are 50 Success Criteria that we should check against. For every aspect (content, UI design, development etc), for every component. I often hear teams say that this is too much of a burden. If we want to decide what applies when and to which team members, we’ll need to be well-versed in WCAG, or hire a consultant who is. Regardless of whether we do that (please do, regular full audits are essential), it makes sense to have some checks that anyone can perform.

Sidenote: of course, in real projects, it takes less to evaluate against the full set of WCAG Success Criteria, as not everything always applies. For instance, a component that doesn’t include any “time based media”, can assume the four Level A/AA criteria that relate to such media don’t apply. And responsibilities per Success Criterion differ too (for more background: the ARRM project works on a matrix mapping Success Criteria to roles and responsibilities).

Have checks that anyone can perform

Checks that anyone can perform don’t require special software or specialist accessibility knowledge. Examples:

- if you zoom in your browser to 400%, does the interface still work?

- can you get to all the clickable things with just the TAB key, use them and see where you are? (this one may require some setup if you’re on a Mac)

- when you click on form labels, does the input gain focus?

So that’s one subset of WCAG. Ideally, we would pick our own for our organisation or project, based on types of websites or content (will we have lots of forms? lots of data visualisation? mostly content? super interactive? etc). Pick checks that many people can perform often.

I’ve seen this approach can be a powerful part of an accessibility strategy. You know, Conway’s Game of Life only has four rules, yet you can use it to build spaceships, gliders, Boolean logic and finite state machines… sometimes there’s power in simple rules and checks.
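To make the “four rules” point concrete, here is a minimal sketch of one Game of Life generation in TypeScript. The names `cells` and `step`, and the choice to store live cells as `"x,y"` strings in a set, are assumptions made for illustration; they don’t come from any library.

```typescript
// Build a set of live cells from [x, y] pairs, stored as "x,y" strings.
function cells(...coords: Array<[number, number]>): Set<string> {
  const live = new Set<string>();
  coords.forEach(([x, y]) => live.add(`${x},${y}`));
  return live;
}

// Compute the next generation from the current set of live cells.
function step(live: Set<string>): Set<string> {
  // Count live neighbours for every cell that touches at least one live cell.
  const counts = new Map<string, number>();
  live.forEach((cell) => {
    const [x, y] = cell.split(",").map(Number);
    for (let dx = -1; dx <= 1; dx++) {
      for (let dy = -1; dy <= 1; dy++) {
        if (dx === 0 && dy === 0) continue; // a cell is not its own neighbour
        const neighbour = `${x + dx},${y + dy}`;
        counts.set(neighbour, (counts.get(neighbour) || 0) + 1);
      }
    }
  });

  const next = new Set<string>();
  counts.forEach((n, cell) => {
    // Rule 1: a live cell with fewer than 2 live neighbours dies.
    // Rule 2: a live cell with 2 or 3 live neighbours lives on.
    // Rule 3: a live cell with more than 3 live neighbours dies.
    // Rule 4: a dead cell with exactly 3 live neighbours becomes alive.
    if (n === 3 || (n === 2 && live.has(cell))) {
      next.add(cell);
    }
  });
  return next;
}
```

Feeding it a horizontal row of three cells, `step(cells([0, 1], [1, 1], [2, 1]))`, gives the vertical row `1,0`, `1,1`, `1,2`, and stepping again flips it back: the classic “blinker”. All four rules collapse into a single condition, which is part of the appeal of simple checks.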

Supersets

With a superset of WCAG, our website can become more accessible.

It’s no secret that WCAG doesn’t cover everything and this only makes sense. Creating standards takes a lot of time, the web industry is in continuous evolution and some barriers can be put into testable criteria more easily than others. The Accessibility Guidelines Working Group (AGWG) in W3C does fantastic work on WCAG (sorry, I am biased), including work to cover more and different user needs and to take into account the ever-changing web. I mean, WCAG 2.* is from 2008 and the basic principles still stand after all those years.

Test with people

One of the most effective ways to find out if our work is accessible, is to test with users with disabilities, either by including them in our regular user tests, or as separate user tests.

User testing with people with disabilities is mostly similar to ‘regular’ user testing, but some things are different. In Things to consider when doing usability testing with disabled people, Peter van Grieken shares tips for recruiting participants, timing, interpretation and accommodation.

The Accessibility Project also has a list of organisations that can help with testing with users with disabilities.

Guidance beyond WCAG

There are also lots of accessibility best practices beyond WCAG, some provided by the W3C as non-normative guidance, some provided by others.

For instance, see:

- Making Content Usable for People with Cognitive and Learning Disabilities, a document filled with UX recommendations, specifically related to people with cognitive and learning disabilities, but useful for all

- XR Accessibility User Requirements for if you’re building anything “extended reality”-like, such as virtual reality and augmented reality

- Accessibility Requirements for People with Low Vision on how to make web content accessible to people with low vision

- GOV.UK accessibility blog, where the folks behind GOV.UK share stories from their accessibility practice, tests they’ve done and more

- Scott O’Hara’s Accessible Components

Summing up

Many use WCAG as a baseline to ensure web accessibility. This matters a lot, it is important to have regular WCAG audits done (e.g. yearly). In this post, we looked at what we can do beyond that, when we use subsets and supersets of the standard. Subsets can help anyone test stuff anytime, which is good for continually catching low hanging fruit. Supersets are useful to ensure you’re really building something that is accessible, by user testing and embedding guidance and best practices beyond WCAG.

Thanks to Eric Bailey, Paul van Buuren and Marjon Bakker for feedback on earlier drafts (thanks do not imply endorsement)

The post Subsets and supersets of WCAG was first posted on hiddedevries.nl blog.

Trying out spicy sections on here

This week I had some fun with <spicy-sections>, an experimental custom element that turns a bunch of headings and content into tabs. Or collapsibles. Or some combination of affordances, based on conditions you set.

Background

Introduced in Brian Kardell’s post Tabs in HTML?, the <spicy-sections> element is a concept from some folks in the Open UI Community Group. In this CG, we analyse UI components that are common in design systems and on the web. The goal is to find out which ones might be suitable additions to the platform, the HTML spec and browsers.

All design systems teams could come up with their own tabs and figure out semantics, accessibility, security and behaviours. But what if some (or all) of those things could be built into HTML, with browsers implementing smart defaults? A bit like the video element… as a developer you only need to feed it a video and some subtitles, and boom, your users can enjoy video. Maybe your specific website needs the play button to be pink or trigger confetti, but generally, there are quite a lot of websites where the default is just right.

The nice thing about platform defaults is also that they can be optimised for the platform that renders them: a select on iOS is different from a select on Microsoft Edge. They work well on these respective platforms. Yes, everyone would like more control over styling of selects, and Open UI is looking at that too (and yay, accent-color is a thing soon). But can we know which styles a given user needs as well as the platform can?

Why spicy sections?

Tabs are among the most frequently requested components. They are also tricky. Some of the considerations:

- meaning: tabs in your browser manage windows, tabs in a webpage manage, eh, sections? These are quite distinct.

- accessibility: with ARIA, there are at least two ways to do tabs (e.g. activate on focus or not?), and in some user tests I saw, users preferred ARIA-less tabs

- what about disabled tabs and user-dismissible ones?

- overflow: what if not all tabs fit on the screen? scrollbars?

(The Tabs Research from Open UI CG has many more tab engineering considerations)
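To make the "activate on focus or not" question concrete, here is a minimal sketch of the two keyboard models. The function name, the state shape and the `activation` option are made up for illustration; this is not code from spicy-sections or from any ARIA library.

```javascript
// Sketch of two keyboard models for tabs (names are hypothetical):
// - 'automatic': a tab is selected as soon as it receives focus
// - 'manual': arrow keys only move focus, Enter or Space selects
function nextTabState(state, key, tabCount, activation = 'automatic') {
  let { focused, selected } = state;

  if (key === 'ArrowRight') {
    // Move focus forward, wrapping around to the first tab
    focused = (focused + 1) % tabCount;
  } else if (key === 'ArrowLeft') {
    // Move focus backward, wrapping around to the last tab
    focused = (focused - 1 + tabCount) % tabCount;
  } else if (key === 'Enter' || key === ' ') {
    selected = focused;
  }

  // With automatic activation, selection always follows focus
  if (activation === 'automatic') {
    selected = focused;
  }

  return { focused, selected };
}
```

With three tabs and the first one selected, pressing the right arrow selects the second tab in the automatic model, while in the manual model it only moves focus there until the user presses Enter or Space.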

The overflow issue raises the question: does content displayed in tabs always belong in tabs, or might there be better controls in some situations? Maybe on small screens we need to get rid of the tabs and use collapsibles? (Maybe we need to ‘uncontrol’ them in specific cases, like print?)

This makes all the more sense if you consider tabs in web pages are really just a different way to display sections and their headings. Headings are tables of contents, after all. “Spicy sections”, then, would be a web platform feature that lets you display sections in different ways: linear, as is the default way to display sections now, as tabs or as collapsibles. You pick which based on constraints, and media queries are the way the spicy-sections demo defines those constraints.

Yes, <spicy-sections> is a demo, the element exists to explore ideas and start the conversation. Brian encourages you to share your thoughts:

Play with it. Build something useful. Ask questions, show us your uses, give us feedback about what you like or don’t. This will help us shape good and successful approaches and inform actual proposals.

As Brian says in his post: it is critical that web developers see these proposals way before they exist in browsers. So if you happen to be a front-end developer reading this… consider if you like this experiment, share your thoughts or questions, don’t feel shy to open a GitHub issue.

Four sections, but spicier

On this website I have a fairly boring page that lists some stuff I do as a freelancer: services. Each service has an h2 and a bit of content. I decided to use this for testing the spicy-sections element IRL.

To get it to work, I included SpicySections.js, a JavaScript class that defines a custom element. The element looks in your CSS for a definition of when you want which affordances. I went for this configuration:

- collapsibles when max-width: 50em (my mobile state) matches

- tabs when min-width: 70em matches

- otherwise, just the headings and content as they are

I could set this in my CSS, using:

spicy-sections {
  --const-mq-affordances:
    [screen and (max-width: 50em) ] collapse |
    [screen and (min-width: 70em) ] tab-bar;
}

I rewrote my markup to have the following expected structure:

<spicy-sections>
  <h2>Name of heading</h2>
  <div>
    content be here
  </div>
  … repeat the h2 and div for more sections
</spicy-sections>

(also works with other headings; h1, h3, etc)

Note: this isn’t final syntax, it could be something else entirely, suggestions are welcomed.
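To illustrate how a configuration like this could be interpreted, here is a minimal sketch that parses the bracketed media query syntax and picks the first affordance that applies. It is not the actual SpicySections.js code: `parseAffordances`, `pickAffordance` and the injected `matches` function are assumptions made for this example.

```javascript
// Sketch only, NOT the actual SpicySections.js implementation.
// Parses a value shaped like the --const-mq-affordances example:
//   [media query] affordance | [media query] affordance
function parseAffordances(value) {
  return value
    .split('|')
    .map((part) => part.trim().match(/^\[(.*)\]\s*([\w-]+)$/s))
    .filter(Boolean) // drop parts that do not follow the expected shape
    .map(([, query, affordance]) => ({ query: query.trim(), affordance }));
}

// Returns the first affordance whose media query matches, or null so that
// the headings and content are shown as they are.
// `matches` is injected so the logic can run outside a browser; in a browser
// it could be (query) => window.matchMedia(query).matches.
function pickAffordance(rules, matches) {
  const rule = rules.find(({ query }) => matches(query));
  return rule ? rule.affordance : null;
}

const rules = parseAffordances(`
  [screen and (max-width: 50em) ] collapse |
  [screen and (min-width: 70em) ] tab-bar
`);
```

With the configuration above, a viewport between 50em and 70em matches neither query, so `pickAffordance` returns `null` and the sections stay linear.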

Some overrides

After I had ensured my markup had the right shape, included the SpicySections JavaScript and set my constraints in CSS, it worked very much as advertised. There were two things I tweaked.

Vertical tabs

With spicy-sections, you’re using headings as tab names. It seems my page has quite long headings. Horizontally, I didn’t have sufficient space, so I wanted mine to go vertical instead.

I got this to work by adding an extra affordance to the web component class, some code to set aria-orientation to vertical and a bit of CSS to make it work visually. I set white-space to normal and made spicy-sections a flex container in the row direction, for easy same height columns.

I could have done this all in CSS, except for aria-orientation, but I am unsure about the benefit of the attribute here: arrow keys for both orientations are supported by default.
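The tweak could look roughly like the sketch below. This is a reconstruction under assumptions, not the code from my site or from SpicySections.js: `makeTabsVertical` is a hypothetical helper, `host` stands for the spicy-sections element, `tabList` for whichever element carries the tablist role, and `tabs` for the headings used as tabs.

```javascript
// Hypothetical helper, not part of SpicySections.js.
// host: the <spicy-sections> element (assumed)
// tabList: the element that carries role="tablist" (assumed)
// tabs: the heading elements used as tab names (assumed)
function makeTabsVertical(host, tabList, tabs) {
  // Tell assistive technology that the tabs are stacked vertically
  tabList.setAttribute('aria-orientation', 'vertical');

  // Put the tabs and the content side by side, as same height columns
  host.style.display = 'flex';
  host.style.flexDirection = 'row';

  // Allow long headings to wrap instead of staying on one line
  for (const tab of tabs) {
    tab.style.whiteSpace = 'normal';
  }
}
```

Only the `setAttribute` call needs JavaScript; the style assignments are shown here to keep the sketch in one place and could live in a stylesheet instead.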

Overriding a margin

In my website’s CSS, I have this line (don’t judge 😬):

*,
*::before,
*::after {
  margin: 0;
  padding: 0;
  box-sizing: border-box;
}

It somehow overwrote the right margin on the arrows that spicy-sections throws in for the collapsed view. No biggie, with CSS’s excellent cascading functionality this was easy to fix.

Nesting

I have also put some spicy-sections inside my spicy-sections: I have some ‘examples’ listed on the side on larger screens, it made sense to me to show them collapsed on smaller screens. This can be done with a combination of spicy-sections:

<spicy-sections>
  <h2>A section</h2>
  <div>
    <spicy-sections>
      <h3>Oh my, I am nested!</h3>
      <div></div>
    </spicy-sections>
  </div>
  …
</spicy-sections>

and this CSS:

.spicy-services-examples {
  --const-mq-affordances:
    [screen and (max-width: 50em) ] collapse;
}

So basically, I only list one affordance and it only applies to “small screens”, or what that means on this site.

Thoughts

This is all a long-winded way to say: I quite like the idea of conditionally changing the appearance of sections. I think it makes sense on the web, because our sites and apps are viewed on so many different screen types and sizes (screen spanning, anyone?). Horizontal tabs are probably not always the best affordance, other affordances make sense in some situations, so a mechanism to switch between them is useful.

The way the experimental component works happens to be very styleable. When I was implementing it on my site, I felt I could make everything look exactly the way I wanted it to look. The tabs are just h2 elements and you can do whatever you want to them, using the styling language we all know and love: CSS.

Reusing media query-like syntax for defining when to use which affordances is helpful. Folks who build responsive websites will be used to it already.

There are also some uncertainties that came to my mind:

- will people get it? Wrapping some content into a spicy-sections element fits well into the way I think about content on the web, I think about markup a lot, it matters to me. I fear it could feel weird to others, especially designers and developers who aren’t very concerned with markup, or who don’t see headings as tables of contents

- is this easy to author in a CMS? In theory this would work well with any CMS content, it could be used with CMSes that exist today, because the markup that is required is “just” headings and content. There may be some trickery required to wrap the content underneath each heading into a div, and the set of sections into the right element, but that should be minor.

- are there other affordances that are not in the current proposal? Maybe something that automatically generates a table of contents kind of thing, like GitHub does for READMEs?

- could it confuse users that semantics and accessibility meta data (roles, states) can change based on constraints?
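On the CMS question, the wrapping "trickery" could be sketched as below. This assumes the CMS hands over a flat list of blocks; the `{ tag, html }` shape and the `wrapSections` name are hypothetical and not tied to any particular CMS.

```javascript
// Sketch: group flat CMS output into the heading + div pairs that the
// markup shown earlier expects. The { tag, html } block shape is hypothetical.
function wrapSections(blocks, headingTag = 'h2') {
  const sections = [];

  for (const block of blocks) {
    if (block.tag === headingTag) {
      // Every heading starts a new section
      sections.push({ heading: block.html, content: [] });
    } else if (sections.length > 0) {
      // Everything else belongs to the most recent heading
      sections[sections.length - 1].content.push(block.html);
    }
    // Content before the first heading is skipped in this sketch
  }

  const body = sections
    .map(({ heading, content }) => `${heading}<div>${content.join('')}</div>`)
    .join('');

  return `<spicy-sections>${body}</spicy-sections>`;
}

const markup = wrapSections([
  { tag: 'h2', html: '<h2>Workshops</h2>' },
  { tag: 'p', html: '<p>I teach.</p>' },
  { tag: 'h2', html: '<h2>Audits</h2>' },
  { tag: 'p', html: '<p>I review.</p>' },
]);
```

A real integration would also need to decide what to do with content that appears before the first heading, which this sketch simply drops.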

Wrapping up

Thanks for reading this write-up! If you like, please play with Brian’s demo on CodePen, perhaps use spicy-sections somewhere and give feedback if you have any.

The post Trying out spicy sections on here was first posted on hiddedevries.nl blog